SanDisk Extreme II Review (480GB, 240GB, 120GB)

by Anand Lal Shimpi on June 3, 2013 7:19 PM EST

A few weeks ago I mentioned on Twitter that I had a new favorite SSD. This is that SSD, and surprisingly enough, it’s made by SanDisk.

The SanDisk part is very unexpected, because until now SanDisk hadn’t really put out a very impressive drive. Much like Samsung in the early days of SSDs, SanDisk is best known for its OEM efforts. The U100 and U110 are quite common in Ultrabooks, and more recently even Apple adopted SanDisk as a source for its notebooks. Low power consumption, competitive pricing and solid validation kept SanDisk in the good graces of the OEMs. Unfortunately, SanDisk did little to push the envelope on performance, and definitely did nothing to prioritize IO consistency. Until now.

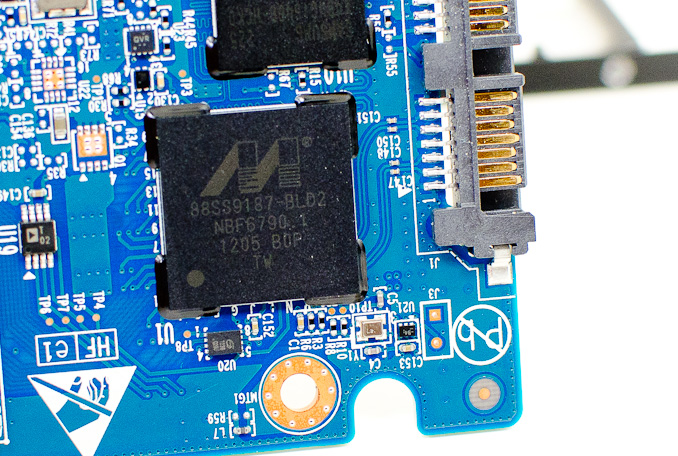

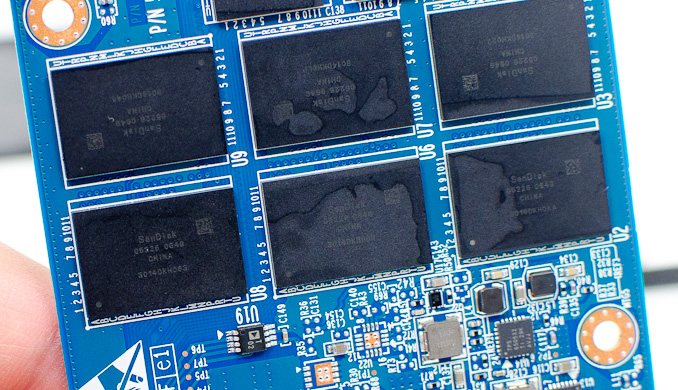

The previous generation SanDisk Extreme SSD used a SandForce controller, with largely unchanged firmware. This new drive, however, moves to a much more favorable combination for companies that have their own firmware development team. Like Crucial’s M500, the Extreme II uses Marvell’s 88SS9187 (codename Monet) controller. SanDisk also rolls its own firmware, a combination we’ve seen in previous SanDisk SSDs (e.g. the SanDisk Ultra Plus). Rounding out the nearly vertical integration is the use of SanDisk’s 19nm eX2 ABL MLC NAND.

This is standard 2-bit-per-cell MLC NAND with a twist: a portion of each MLC NAND die is set to operate in SLC/pseudo-SLC mode. SanDisk calls this its nCache. The nCache is used as a lower latency/higher performance write buffer. In the Ultra Plus, I pointed out that there simply wasn’t much NAND allocated to the nCache since it is pulled from the ~7% spare area on the drive. With the Extreme II, SanDisk doubled the amount of spare area on the drive, which could impact the size of the nCache.
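To put rough numbers on the spare area figures, here is a minimal sketch of the arithmetic. It assumes both drives carry 256GiB of raw NAND, with 256GB exposed to the user on a ~7% spare area drive and 240GB exposed on the Extreme II. Those raw capacities are an assumption for illustration, not something SanDisk has confirmed here, and `spare_area_fraction` is a hypothetical helper name.

```python
GIB = 2**30  # bytes in a binary gigabyte (how NAND is typically counted)
GB = 10**9   # bytes in a decimal gigabyte (how drive capacity is advertised)


def spare_area_fraction(raw_gib: float, user_gb: float) -> float:
    """Return spare area as a fraction of the raw NAND on the drive."""
    raw_bytes = raw_gib * GIB
    user_bytes = user_gb * GB
    return (raw_bytes - user_bytes) / raw_bytes


# Assumed raw NAND capacities (hypothetical, for illustration only).
configs = [
    ("256GB user / 256GiB raw", 256, 256),
    ("240GB user / 256GiB raw", 256, 240),
]

for label, raw_gib, user_gb in configs:
    print(f"{label}: {spare_area_fraction(raw_gib, user_gb):.1%} spare area")
```

Under those assumptions the spare area goes from just under 7% to just under 13%, which is in line with the "doubled" description above.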

| SanDisk Extreme II Specifications | 120GB | 240GB | 480GB |
|---|---|---|---|
| Controller | Marvell 88SS9187 | Marvell 88SS9187 | Marvell 88SS9187 |
| NAND | SanDisk 19nm eX2 ABL MLC NAND | SanDisk 19nm eX2 ABL MLC NAND | SanDisk 19nm eX2 ABL MLC NAND |
| DRAM | | 256MB DDR3-1600 | 512MB DDR3-1600 |
| Form Factor | 2.5" 7mm | 2.5" 7mm | 2.5" 7mm |
| Sequential Read | | 550MB/s | 545MB/s |
| Sequential Write | 340MB/s | 510MB/s | 500MB/s |
| 4KB Random Read | 91K IOPS | 95K IOPS | 95K IOPS |
| 4KB Random Write | 74K IOPS | 78K IOPS | 75K IOPS |
| Drive Lifetime | 80TB Written | 80TB Written | 80TB Written |
| Warranty | 5 years | 5 years | 5 years |
| MSRP | $129.99 | $229.99 | $439.99 |

Some small file writes are supposed to be buffered to the nCache, but that didn’t seem to improve performance in the case of the Ultra Plus, leading me to doubt its effectiveness. However, SanDisk mentioned the nCache can be used to improve data integrity as well. The indirection/page table is stored in nCache, which SanDisk believes gives it a better chance of maintaining the integrity of that table in the event of sudden power loss (since writes to nCache are quicker than to the MLC portion of the NAND). The Extreme II itself doesn’t have any capacitor-based power loss data protection.

Don't be too put off by the 80TB written rating for the drives. The larger drives should carry higher ratings (and they will last longer), but in order to claim a higher endurance SanDisk would have to actually validate to that higher endurance specification. For client drives, we often see SSD vendors provide a single endurance rating in order to keep validation costs low, despite the fact that larger drives will be able to sustain more writes over their lifetime. SanDisk offers a 5 year warranty with the Extreme II.
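For a sense of scale, the 80TB rating can be spread evenly over the 5 year warranty period. The sketch below does only that arithmetic; it assumes decimal units (1TB = 1000GB) and 365-day years, and `writes_per_day_gb` is a hypothetical helper name, not anything from SanDisk's spec sheet.

```python
def writes_per_day_gb(endurance_tb: float, warranty_years: float) -> float:
    """Average host writes per day (in GB) that exhaust the rating at warranty end."""
    total_gb = endurance_tb * 1000   # assumption: decimal units, 1TB = 1000GB
    days = warranty_years * 365      # assumption: ignore leap days
    return total_gb / days


# Rated endurance and warranty length taken from the spec table.
print(f"{writes_per_day_gb(80, 5):.1f} GB/day")  # prints "43.8 GB/day"
```

That works out to a bit under 44GB of writes every day for five years, which is well beyond what a typical client workload generates.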

Despite the controller’s capabilities (as we’ve seen with the M500), SanDisk’s Extreme II doesn’t enable any sort of AES encryption or eDrive support.

With the Extreme II, SanDisk moved to a much larger amount of DRAM per capacity point. Similar to Intel’s S3700, SanDisk now uses around 1MB of DRAM per 1GB of NAND capacity. With a flat indirection/page table structure, sufficient DRAM and an increase in spare area, it would appear that SanDisk is trying to improve IO consistency. Let’s find out if they have.
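As a quick check on the "around 1MB of DRAM per 1GB of NAND" figure, the ratio can be worked out from the DRAM sizes listed in the spec table. This is only a sketch: `dram_mb_per_gb` is a hypothetical helper, and the 120GB model is left out because its DRAM size is not listed in the table.

```python
def dram_mb_per_gb(dram_mb: float, capacity_gb: float) -> float:
    """DRAM (in MB) available per GB of advertised drive capacity."""
    return dram_mb / capacity_gb


# DRAM sizes from the spec table; the 120GB entry is not listed there.
dram_by_capacity = {240: 256, 480: 512}

for capacity_gb, dram_mb in dram_by_capacity.items():
    ratio = dram_mb_per_gb(dram_mb, capacity_gb)
    print(f"{capacity_gb}GB: {ratio:.2f} MB of DRAM per GB")
```

Both listed capacities come out at roughly 1.07MB per GB.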

51 Comments

jhh - Monday, June 3, 2013 - link

I wish there were more latency measurements. The only latency measurements were during the Destroyer benchmark. Latency under a lower load would be a useful metric. We are using NFS on top of ZFS, and latency is the biggest driver of performance.

jmke - Tuesday, June 4, 2013 - link

there is still a lot of headroom left; storage is still the bottleneck of any computer; even with 24 SSDs in RAID 0 you still don't get lightning speed.

Try a RAM drive which allows for 7700Mb/s write speed and 1000+mb/s at 4k random write

http://www.madshrimps.be/vbulletin/f22/12-software...

put some data on there, and you can now start stressing your CPU again :)

iwod - Tuesday, June 4, 2013 - link

The biggest bottleneck is Software. And even with my SSD running in SATA 2, my Core2Duo running Windows 8 can boot in just 10 seconds. And Windows 7 within 15 seconds. With my Ivy Bridge + SATA 3 SSD running 1 - 2 seconds faster on boot time.

In terms of consumer usage, 99% of us will probably need much faster Seq Read Write Speed. We are near the end of Random Write improvement. Where Random Read we could do with a lot more increase.

Once we move to SATA Express with 16Gbps, we could be moving the bottleneck back to CPU. And since we are not going to get much more IPC and GHz improvements, we are going to need software written with Multi Core in mind to see further improvement gains. So quite possibly the next generation of Chipset and CPU will be the last of this generation before we have software move to Multi Core paradigm. Which, looking at it now, is going to take a long time.

glugglug - Tuesday, June 4, 2013 - link

Outside of enterprise server workloads, I don't think you will notice a difference between current SSDs.

However, higher random write IOPS is an indicator of lower write amplification, so it could be a useful signal to guess at how long before the drive wears out.

vinuneuro - Tuesday, December 3, 2013 - link

For most users, a long time ago it stopped mattering. In a machine used for Word/Excel/Powerpoint, Internet, Email, Movies, I stopped being able to perceive a difference day to day after the Intel X25-M/320. I tried Samsung 470's and 830's and got rid of both for cheaper Intel 320's.

whyso - Monday, June 3, 2013 - link

Honestly for the average person and most enthusiasts SSDs are plenty fast and a difference isn't noticeable unless you are benchmarking (unless the drive is flat out horrible; but the difference between a m400 and a 840 pro is unnoticeable unless you are looking for it). The most important parts of an SSD then become performance consistency (though really few people actually apply a workload where that is a problem), power use (mainly for mobile), and RELIABILITY.

TrackSmart - Monday, June 3, 2013 - link

I agree 100%. I can't tell the difference between the fast SSDs from the last generation and those of the current generation in day-to-day usage. The fact that Anand had to work so hard to create a testing environment that would show significant differences between modern SSDs is very telling. Given that reality, I choose drives that are likely to be highly reliable (Crucial, Intel, Samsung) over those that have better benchmark scores.

jabber - Tuesday, June 4, 2013 - link

Indeed, considering the mech HDDs the average user out there is using are lucky to push more than 50MBps with typical double figure access times.

When they experience just 150MBps with single digit access times they nearly wet their pants.

MBps isn't the key for average use (as in folks that are not pushing gigabytes of data around all day) it's the access times.

SSD reviews for many are getting like graphics card reviews that go "Well this new card pushed the framerate from 205FPS to an amazing 235FPS!"

Erm great..I guess.

old-style - Monday, June 3, 2013 - link

Great review.

I think Anand's penchant for on-drive encryption ignores an important aspect of firmware: it's software like everything else. Correctness trumps speed in encryption, and I would rather trust kernel hackers to encrypt my data than an OEM software team responsible for an SSD's closed-source firmware.

I'm not trying to malign OEM programmers, but encryption is notoriously difficult to get right, and I think it would be foolish to assume that an SSD's onboard encryption is as safe as the mature and widely used dm-crypt and Bitlocker implementations in Linux and Windows.

In my mind the lack of firmware encryption is a plus: the team at SanDisk either had the wisdom to avoid home-brewing an encryption routine from scratch, or they had more time to concentrate on the actual operation of the drive.

thestryker - Monday, June 3, 2013 - link

http://www.anandtech.com/show/6891/hardware-accele...

I'm not sure you are aware of what he is referring to.