OCZ Announces ZD-XL PCIe SQL Accelerator SSD Solution

by Anand Lal Shimpi on July 23, 2013 8:00 AM EST - Posted in

- Storage

- IT Computing

- SSDs

- OCZ

- Enterprise SSDs

About a year and a half ago OCZ announced the acquisition of Sanrad, an enterprise storage solutions company with experience in flash caching. Today we see some of the fruits of that acquisition with the announcement of OCZ's first SQL accelerator card: the ZD-XL.

Enterprise accelerator cards are effectively very high performance SSD caching solutions. Some enterprise applications and/or databases can have extremely large footprints (think many TBs or PBs), making a move to a flash-only server environment undesirable or impossible. The next best option is to use a NAND-based SSD cache. You won't get full data coverage, but if you size the cache appropriately you might get a considerable speedup.

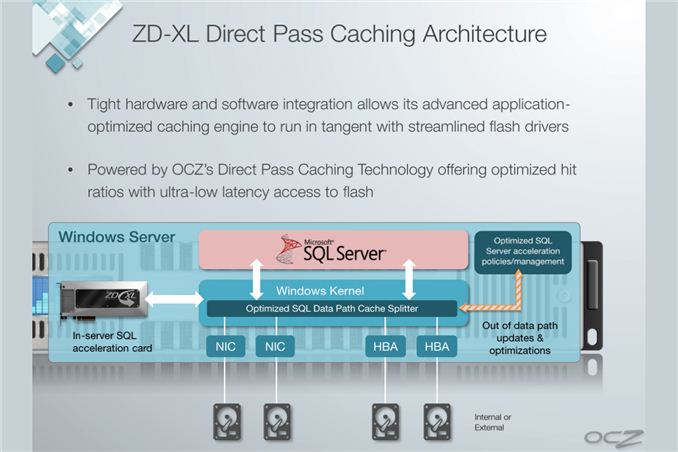

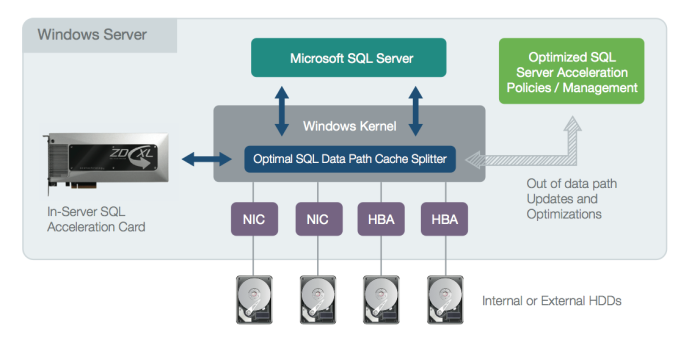

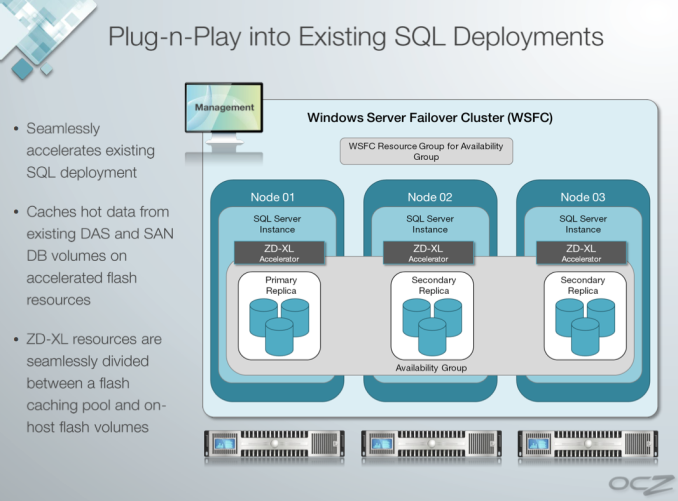

OCZ's ZD-XL is specifically targeted at servers running Microsoft SQL Server. Although the solution technically caches all IOs to a given volume, OCZ's ZD-XL software is specifically tuned for SQL Server workloads. The ZD-XL only supports physical Windows Server environments; OCZ offers a separate line of products for accelerating virtualized environments.

The ZD-XL SSD itself is based on OCZ's Z-Drive r4, featuring 4 or 8 SandForce SF-2000 series controllers (depending on capacity) on a single PCIe Gen 2 x8 card. OCZ doesn't make the NAND or the controllers, but the card is built in-house. The ZD-XL implementation comes with custom firmware and software, courtesy of OCZ's Sanrad team. The architecture, at least on paper, looks quite sensible.

The ZD-XL card ships with special firmware that enables support for the ZD-XL software layer, which prevents Z-Drive r4 owners from simply downloading the ZD-XL caching software and rolling their own solution. The software layer is really where the magic happens. All IOs directed at a target volume (e.g. where your SQL database is stored) are intercepted by a thin driver (the cache splitter) and passed along to the target volume. An out-of-band analysis engine looks at the stream of IOs and determines which data is best suited for caching. Frequently accessed data is copied over to the ZD-XL card, and whenever the cache splitter sees a request for data that's stored on the ZD-XL, the request is served from the very fast PCIe SSD rather than from a presumably slower array of hard drives or a high-latency SAN.
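To make that read path concrete, here is a minimal Python sketch of a cache splitter with an out-of-band analysis step. It is a toy model, not OCZ's driver: the class and method names (`CacheSplitter`, `analyze`), the access-count promotion threshold, and the write-around handling (writes go to the backing volume and invalidate any cached copy) are all assumptions made for illustration, and plain dictionaries stand in for the hard drive array and the PCIe card.

```python
from collections import Counter


class CacheSplitter:
    """Hypothetical model of the IO path; not OCZ's actual driver."""

    def __init__(self, backing, capacity, promote_threshold=3):
        self.backing = backing          # block number -> data on the slow volume
        self.ssd = {}                   # block number -> copy held on the PCIe card
        self.capacity = capacity        # max number of blocks the card may hold
        self.promote_threshold = promote_threshold
        self.access_counts = Counter()  # IO stream observed by the analysis step
        self.hits = 0
        self.misses = 0

    def read(self, block):
        self.access_counts[block] += 1
        if block in self.ssd:           # cached: serve from the fast card
            self.hits += 1
            return self.ssd[block]
        self.misses += 1                # not cached: pass through to the volume
        return self.backing[block]

    def write(self, block, data):
        # Assumed write-around policy: the volume stays authoritative and any
        # cached copy is dropped so a later read cannot return stale data.
        self.access_counts[block] += 1
        self.backing[block] = data
        self.ssd.pop(block, None)

    def analyze(self):
        # Out-of-band pass: keep only the most frequently accessed blocks that
        # cleared the threshold, copying their current data onto the card.
        hot = [
            block
            for block, count in self.access_counts.most_common(self.capacity)
            if count >= self.promote_threshold
        ]
        self.ssd = {block: self.backing[block] for block in hot}
```

Rebuilding the cache wholesale in `analyze` keeps the sketch short; a real engine would presumably copy data incrementally and age out old access counts, which this toy does not attempt.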

The analysis engine supports multiple caching policies and can dynamically switch between them, depending on workload, to avoid thrashing the cache. There's even cache policy support for deployment in a SQL Server AlwaysOn environment.
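OCZ doesn't describe how its engine chooses a policy. As one illustration of the general idea, the hypothetical `AdaptiveAdmission` class below watches the hit rate over a sliding window of recent reads. When the rate drops below a low-water mark (a common symptom of a large scan churning the cache), it switches from admitting every block on first touch to admitting only blocks that have been seen several times. The window size, the threshold, and both policies are assumptions for this sketch, not OCZ's design.

```python
from collections import deque


class AdaptiveAdmission:
    """Hypothetical policy switcher driven by the recent hit rate."""

    def __init__(self, window=100, low_water=0.2, frequent_after=3):
        self.recent = deque(maxlen=window)   # 1 for a hit, 0 for a miss
        self.low_water = low_water           # hit rate that signals thrashing
        self.frequent_after = frequent_after # accesses needed under "frequency"
        self.policy = "recency"

    def record(self, hit):
        self.recent.append(1 if hit else 0)
        if len(self.recent) == self.recent.maxlen:
            rate = sum(self.recent) / len(self.recent)
            # Low hit rate: stop letting one-off blocks evict useful data.
            self.policy = "frequency" if rate < self.low_water else "recency"

    def should_admit(self, access_count):
        if self.policy == "recency":
            return True                      # cache anything just touched
        return access_count >= self.frequent_after
```

Gating admission on repeat accesses is a standard way to make a cache resist scans; which signals a production engine uses to switch policies is not something the announcement specifies.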

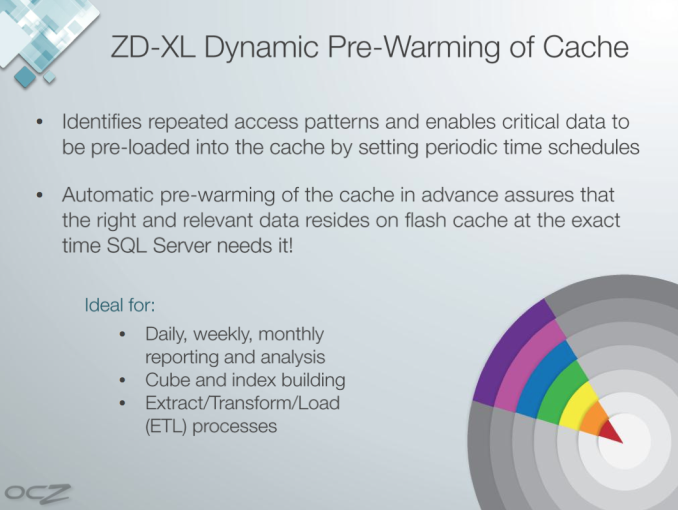

OCZ's software supports automated pre-warming of the cache. For example, if you have a large query that runs between 9AM and 11:30AM every day, the ZD-XL can look at the access patterns generated during that period and ensure that as much of the accessed data as possible is available in the cache during that time period.
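A minimal sketch of time-window pre-warming, assuming the software keeps a log of `(timestamp, block)` pairs: count which blocks were touched inside the recurring window on previous days, then load the most popular ones onto the card before the window opens. The function name `blocks_to_prewarm`, the log format, and the simple frequency ranking are illustrative assumptions; OCZ does not document how its pre-warming chooses data.

```python
from collections import Counter
from datetime import datetime, time


def blocks_to_prewarm(access_log, start, end, cache_blocks):
    """Rank blocks by how often they were read inside a daily time window.

    access_log:   iterable of (datetime, block_number) pairs (assumed format)
    start, end:   datetime.time bounds of the recurring window
    cache_blocks: how many blocks the cache can hold
    """
    counts = Counter(
        block for ts, block in access_log if start <= ts.time() < end
    )
    return [block for block, _ in counts.most_common(cache_blocks)]


log = [
    (datetime(2013, 7, 22, 9, 15), 42),
    (datetime(2013, 7, 22, 10, 0), 42),
    (datetime(2013, 7, 22, 10, 5), 7),
    (datetime(2013, 7, 22, 14, 0), 99),   # outside the window, ignored
]
print(blocks_to_prewarm(log, time(9, 0), time(11, 30), cache_blocks=2))
# prints: [42, 7]
```

For the 9AM to 11:30AM example above, the returned blocks would be copied onto the card shortly before 9AM each day.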

OCZ's ZD-XL can be partitioned into cache and native storage volumes. In other words, you don't have to use the entire capacity of the card as cache; you can treat part of it as a static volume (e.g. for your log files) and the rest as a cache for a much larger database.

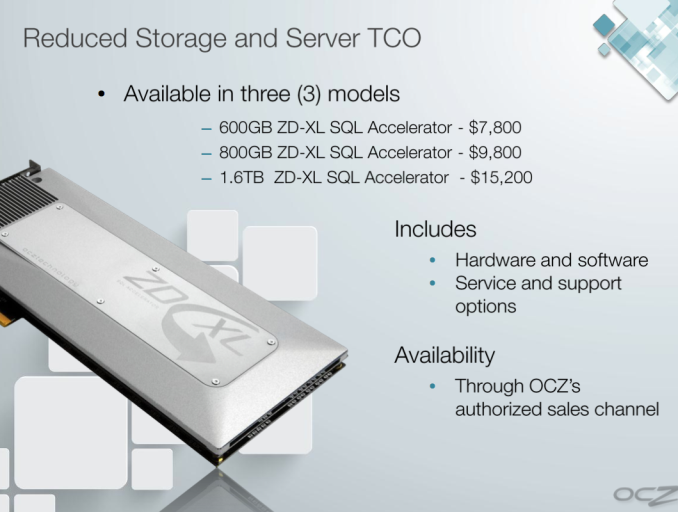

The large capacities OCZ offers with the ZD-XL (600GB, 800GB, 1.6TB) will likely help ensure good cache utilization, although there's no pre-purchase way of figuring out what the ideal cache size would be for your workload. This seems to be a problem with enterprise SSDs in general: IT administrators have to do a lot of work to determine the best SSD (or SSD caching) solution for their specific environment, and there aren't many tools out there to help characterize a workload.
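One established way to approach the sizing problem (not something the announcement says OCZ ships) is to capture a trace of block addresses from the production volume and compute a miss ratio curve with Mattson's LRU stack algorithm. A single pass over the trace yields the hit ratio a plain LRU cache would achieve at every size, so you can see where adding capacity stops paying off. The sketch assumes the trace is already available as a list of block numbers and that the cache behaves like plain LRU, which a smarter policy such as OCZ's may well beat.

```python
def lru_hit_ratios(trace, cache_sizes):
    """Hit ratio an LRU cache of each size would achieve on `trace`.

    Mattson's stack algorithm: a reuse found at LRU stack depth d hits in any
    LRU cache holding more than d blocks, so one pass covers every size.
    """
    stack = []       # LRU stack, most recently used block at index 0
    depths = []      # reuse depth per access; None marks a first touch
    for block in trace:
        if block in stack:
            depth = stack.index(block)
            del stack[depth]
            depths.append(depth)
        else:
            depths.append(None)      # compulsory miss at any cache size
        stack.insert(0, block)
    if not depths:
        return {size: 0.0 for size in cache_sizes}
    return {
        size: sum(1 for d in depths if d is not None and d < size) / len(depths)
        for size in cache_sizes
    }
```

For the toy trace `[1, 2, 1, 2, 3, 1]` and sizes `[1, 2, 3]`, this returns hit ratios of 0, 1/3 and 1/2: the final access to block 1 only hits once the cache can hold three blocks. The list-based stack is quadratic in the worst case and only suited to small traces; real trace analysis tools use tree-based stack distance calculations.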

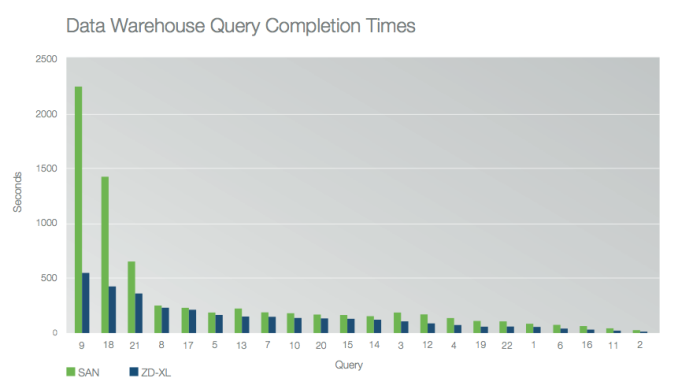

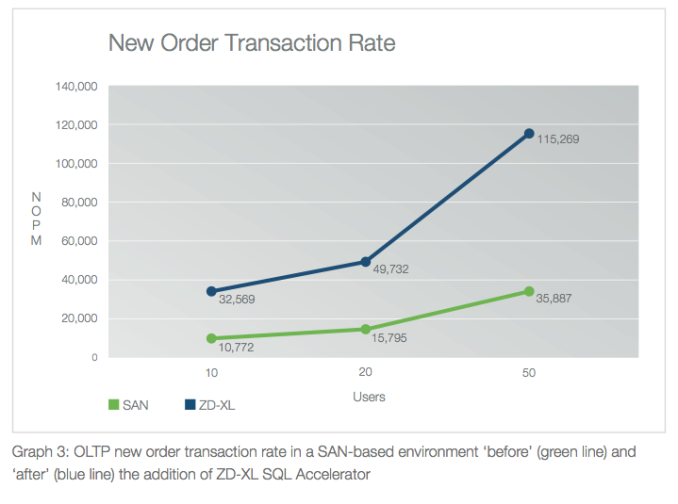

OCZ published a white paper showing performance gains for simulated workloads with and without the ZD-XL accelerator. In all of OCZ's test cases the improvements were tremendous, which is what you'd expect when introducing a giant PCIe SSD into a server that previously only had big arrays of mechanical hard drives. Queries complete faster, servers that aren't CPU bound can support more users, and so on.

Just as we've seen in the client space, SSD caching can work - the trick is ensuring you have a large enough cache to have a meaningful impact on performance. Given that the smallest ZD-XL is already 600GB, I don't think this will be an issue.

The only other requirement is that the caching software itself needs to be intelligent enough to make good use of the cache. OCZ's internal test data obviously shows the cache doing its job well when caching very long queries. Building good caching software isn't too difficult; it's just a matter of implementing well-understood caching algorithms and having the benefit of a large enough cache to use.
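As an example of one such well-understood algorithm, here is least-recently-used (LRU) eviction in Python using `collections.OrderedDict`. It is a generic textbook sketch; the article doesn't say which algorithms OCZ's software actually implements.

```python
from collections import OrderedDict


class LRUCache:
    """Fixed-capacity least-recently-used cache (a textbook policy, not OCZ's)."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.entries = OrderedDict()   # least recently used first, newest last

    def get(self, key):
        if key not in self.entries:
            return None                # miss: caller falls back to the slow volume
        self.entries.move_to_end(key)  # mark as most recently used
        return self.entries[key]

    def put(self, key, value):
        if key in self.entries:
            self.entries.move_to_end(key)
        self.entries[key] = value
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict the least recently used entry
```

In a block cache the keys would be block numbers and the values the cached data; returning `None` for a miss keeps the sketch short but assumes `None` is never a stored value.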

The ZD-XL is priced at $7800, $9800 and $15,200 for 600GB, 800GB and 1.6TB capacities. The prices include software and drivers.
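Dividing those list prices by raw capacity shows the cost per gigabyte falling as capacity rises. This is simple arithmetic on the figures above; it ignores the bundled software and any capacity you set aside as a native volume.

```python
def price_per_gb(prices_usd):
    """Map {capacity in GB: list price in USD} to {capacity: USD per GB}."""
    return {capacity: price / capacity for capacity, price in prices_usd.items()}


zd_xl_prices = {600: 7800, 800: 9800, 1600: 15200}
for capacity, cost in price_per_gb(zd_xl_prices).items():
    print(f"{capacity:>5} GB: ${cost:.2f}/GB")
# prints:
#   600 GB: $13.00/GB
#   800 GB: $12.25/GB
#  1600 GB: $9.50/GB
```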

26 Comments


Sabresiberian - Wednesday, July 24, 2013

One of the things I think happens is that people get marginal controllers that cause some hardware to crash. I've certainly had that happen with a faulty RAID controller eating hard drives. That being said, I would be very reluctant to use an OCZ drive in a mission-critical situation because there simply have been too many more complaints vs. other names that provide hardware that performs well and also doesn't seem to be as controller sensitive (or are just built better, whatever the case). Frankly, for mission-critical, you'd be hard pressed to get me off of an Intel solution.

That being said, I could let OCZ talk me into something for my gaming rig, being as how I keep a whole second computer for "backup" anyway, heh. However, they have very stiff competition from players that have excellent reputations. Frankly, the main pull for me to buy OCZ is that for some reason I like the company (I think that's based on an article Anandtech did a while back, or at least my memory of that article :), and I'd rather give my money to a "maverick on the rise" than a behemoth like Intel or Samsung.

I WANT them to succeed, but I do think they need to do something to enhance their reputation if they want to stay in the SSD business.

enealDC - Thursday, July 25, 2013

Most organizations that can afford the price tag for this kind of hardware don't rely on RAID controllers inside local servers. The storage for SQL is carved off some tier of their storage infrastructure/SAN and presented to the server, normally via Fibre Channel. That is why this kind of in-host solution doesn't make sense to me. The cloud model is normally one-to-many, so having a device that I need to stick in one server that can only be used by... one server breaks the ever-growing virtualization model.

bill.rookard - Tuesday, July 23, 2013

Seems rather pricey, and it only really applies to huge systems. Can't comment on reliability, but I've never gone with an OCZ drive. I have Crucial M4s in my webserver: rock solid and fast except for the 5000-hour bug, which was easy enough to fix with a re-flash, and no data loss.

JlHADJOE - Tuesday, July 23, 2013

An excellent idea, but as other posters have insinuated, I'd never trust an OCZ product with an actual production server.

romrunning - Tuesday, July 23, 2013

I've said this before, and I'll say it again: I really wish Anandtech had tests of SSDs in RAID-5 arrays. This is really useful for SMBs who may not want (or be able to afford) a SAN. I'm very curious to see if the 20% spare area affects SSDs just as much when they're RAIDed together as it does standalone. I also don't care if the SSDs are branded as "enterprise" drives. It would be nice to see how a 5x256GB Samsung 840 Pro RAID-5 array would perform, or even a 5x400GB Seagate 600 Pro RAID-5 array.

bobbozzo - Tuesday, July 23, 2013

I'd like to see this too, preferably with ZFS RAID-Z instead of / in addition to hardware RAID5.

Xenocide622 - Wednesday, July 24, 2013

Actually, where I work I recently specced out a few systems with an LSI 9271-8i hooked up to four regular 500GB Samsung 840 drives (not the Pros). Stripe size was set to 256K, and even with a deep queue these drives just chug through data. These boxes replaced ones that had 8x 2TB drives in a RAID 5, and those realistically couldn't push more than 8.8-9.5MB/s of our workload (heavy random reads and writes). With the SSDs the boxes sit idle and are currently limited by the dual gigabit links on the box itself. Here's a shot of the ATTO Disk Benchmark in Server 2012: http://i.imgur.com/xU05cdC.png The rest of the box is a hex-core 2GHz E5-2620 and 16GB DDR3 ECC @ 1600MHz on a Supermicro x9d4l-if board.

Guspaz - Thursday, July 25, 2013

It may be more economical (when using ZFS) to use SSDs as an L2ARC and SLOG in front of regular spinning rust. You'd need a minimum of three drives for that (the L2ARC doesn't need to be mirrored because it doesn't matter if it fails, but the SLOG needs a mirror to avoid data loss on failure).

Kraszmyl - Friday, July 26, 2013

Eh, it scales about as well as the consumer end does. As for the life, I have four PM810s in a RAID 0 on a PERC 5 flashed with newer LSI firmware and they are still alive and doing their thing. The PM810 is an MLC drive, and the PERC 5 has no clue what an SSD is and bitches about them constantly not being SAS drives, so it just treats them like normal drives and I have to rely on the on-drive wear leveling and whatnot. The controller also has four 2TB drives in a RAID 5. Interesting note: the newer version of the storage manager has an SSD caching ability, which I would totally love to try; however, that's only supported by the newer LSI controllers and not my old ass PERC 5.

atomt - Tuesday, July 23, 2013

Looks more or less identical in architecture and functionality to bcache in Linux, except that it has some artificial limitations wrt "firmware support" (read: some flag or name the software checks for) and perhaps a GUI of sorts.