Samsung SSD 840 EVO Review: 120GB, 250GB, 500GB, 750GB & 1TB Models Tested

by Anand Lal Shimpi on July 25, 2013 1:53 PM EST

Posted in: Storage, SSDs, Samsung, TLC, Samsung SSD 840

RAPID: PCIe-like Performance from a SATA SSD

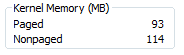

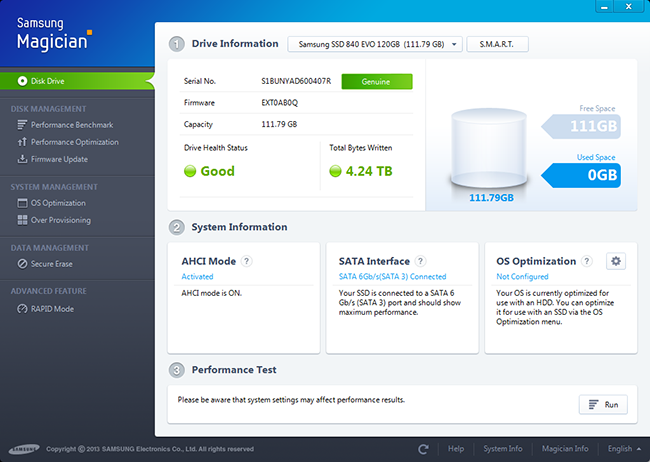

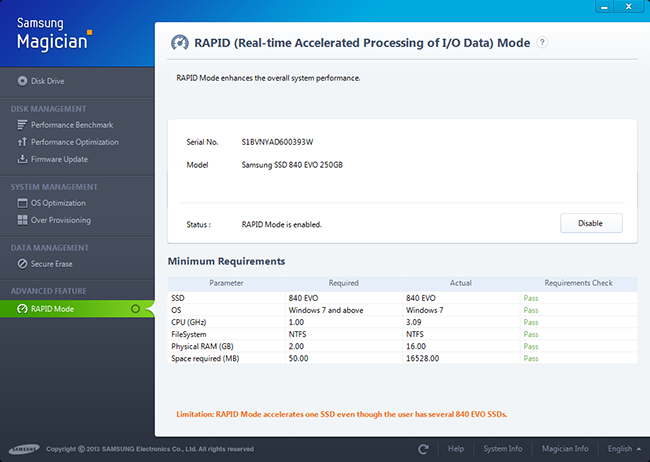

The software story around Samsung's SSD 840 EVO is quite possibly the strongest we've ever seen from an SSD manufacturer. Samsung's SSD Magician got a major update not too long ago, giving it a downright awesome UI. Magician gives you access to SMART details about your drive and provides decent visualization of things like total host writes. I'd love to see total NAND writes reported somewhere, as reporting host writes alone doesn't take write amplification into account and can give a false sense of security to users deploying drives into very write-intensive environments. There's a prominent drive health indicator that is tied to NAND wear and should draw a lot of attention to itself should things get bad. Samsung's SSD Magician also includes a built-in benchmark, controllable overprovisioning and secure erase functionality.

Samsung sent us a beta of the next version of its Magician software (4.2), which includes support for RAPID mode (Real-time Accelerated Processing of I/O Data). RAPID is a feature exclusive to the EVO (for now) and comes courtesy of Samsung's NVELO acquisition from last year. As NVELO focused on NAND caching software, you shouldn't be too surprised by RAPID's role in improving storage performance. Unlike traditional SSD caches that use NAND to cache mechanical storage, however, RAPID is designed to further improve the performance of an SSD, not to make an HDD more SSD-like. RAPID uses some of your system memory and CPU resources to cache hot data, serving it out of DRAM rather than your SSD.

The architecture is rather simple to understand. Enabling RAPID installs a filter driver on your Windows machine that keeps track of all reads/writes to a single EVO (RAPID only supports caching a single drive today). The filter driver looks at both file types/sizes and LBAs, but it fundamentally caches at the block level (it simply gets hints from the filesystem to determine what to cache). File types that are meaningless to cache are automatically excluded (think very large media files), but things like Outlook PST files are prime targets for caching. Since RAPID works at the block level you can cache frequently used parts of a file, rather than having to worry about a file being too big for the cache.
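To make the idea of block-level caching with filesystem hints a bit more concrete, here is a minimal Python sketch. It is not Samsung's implementation: the `BlockCache` class, the LRU eviction policy, the 4KB block size and the list of excluded extensions are all assumptions picked purely for illustration.

```python
from collections import OrderedDict

BLOCK_SIZE = 4096  # assumed cache granularity, not a documented RAPID value
EXCLUDED_EXTENSIONS = {".mkv", ".mp4", ".iso"}  # hypothetical "pointless to cache" hint list


class BlockCache:
    """Toy LRU read cache keyed by LBA, with a file-type hint to skip caching."""

    def __init__(self, capacity_blocks, backing_read):
        self.capacity = capacity_blocks
        self.backing_read = backing_read  # callable: lba -> bytes (stands in for the SSD)
        self.blocks = OrderedDict()
        self.hits = 0
        self.misses = 0

    def read(self, lba, file_ext=""):
        if lba in self.blocks:
            self.blocks.move_to_end(lba)  # mark as most recently used
            self.hits += 1
            return self.blocks[lba]
        self.misses += 1
        data = self.backing_read(lba)
        # The filesystem hint only decides whether to cache; the cache itself is per block.
        if file_ext.lower() not in EXCLUDED_EXTENSIONS:
            self.blocks[lba] = data
            if len(self.blocks) > self.capacity:
                self.blocks.popitem(last=False)  # evict the least recently used block
        return data


def fake_ssd_read(lba):
    # Stand-in for a real device read: returns a deterministic block.
    return bytes([lba % 256]) * BLOCK_SIZE


cache = BlockCache(capacity_blocks=2, backing_read=fake_ssd_read)
cache.read(10, ".pst")   # miss, then cached
cache.read(10, ".pst")   # hit, served from memory
cache.read(500, ".mkv")  # miss, excluded by the file-type hint
cache.read(500, ".mkv")  # miss again, because it was never cached
print(cache.hits, cache.misses)
```

Because the cache is keyed by block address rather than by file, only the hot blocks of a large PST file would occupy memory in a scheme like this, which is the property the paragraph above describes.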

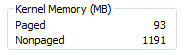

The cache resides in main memory and is allocated out of non-paged kernel memory. In fact, that's the easiest way to determine whether or not RAPID is actually working - you'll see non-paged kernel memory jump in size after about a minute of idle time on your machine:

Presently RAPID will use no more than 25% of system memory or 1GB, whichever is smaller. Both reads and writes are cached, but in different ways. The read cache works as you'd expect, while for writes RAPID more accurately does something like buffering/combining. Reads are simple to cache (just look at what addresses are frequently accessed and draw those into the cache), but writes offer a different set of challenges. If you write to DRAM first and write back to the SSD you run the risk of losing a ton of data in the event of a crash or power failure. Although RAPID obeys flush commands, there's always the risk that anything pending could be lost in a system crash. Recognizing this potential, Samsung tells me that RAPID instead tries to focus on combining low queue depth writes into much larger bundles of data that can be written more like large transfers across many NAND die. To test this theory I ran our 4KB random write IOmeter test at a queue depth of 1 with RAPID enabled and disabled:

Samsung SSD 840 EVO 250GB - 4K Random Write, QD1, 8GB LBA Space

| | IOPS | MB/s | Average Latency | Max Latency | CPU Utilization |
|---|---|---|---|---|---|
| RAPID Disabled | 22769.31 | 93.26 MB/s | 0.0435 ms | 0.7512 ms | 13.81% |
| RAPID Enabled | 73466.28 | 300.92 MB/s | 0.0135 ms | 31.4259 ms | 31.18% |
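The columns in this table are tied together by simple arithmetic, which the short Python sketch below works through. The helper names are made up for illustration; the only assumptions are that MB/s means decimal megabytes and that, with a single IO in flight at QD1, average latency is roughly the inverse of IOPS.

```python
BLOCK_BYTES = 4 * 1024  # 4KB transfers


def throughput_mb_s(iops, block_bytes=BLOCK_BYTES):
    """Convert IOPS to MB/s (decimal megabytes)."""
    return iops * block_bytes / 1e6


def qd1_latency_ms(iops):
    """At queue depth 1 only one IO is in flight, so latency is about 1 / IOPS."""
    return 1000.0 / iops


results = {"RAPID Disabled": 22769.31, "RAPID Enabled": 73466.28}
for name, iops in results.items():
    print(f"{name}: {throughput_mb_s(iops):.2f} MB/s, ~{qd1_latency_ms(iops):.4f} ms per IO")

speedup = results["RAPID Enabled"] / results["RAPID Disabled"]
print(f"Speedup: {speedup:.2f}x")
```

The throughput figures reproduce the MB/s column, and the estimated per-IO latencies land close to the measured averages, so the average latency drop is just the IOPS gain seen from the other side.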

Write coalescing seems to work extremely well here. With RAPID enabled the system sees even better random write performance than it would at a queue depth of 32. Average latency drops although the max observed latency was definitely higher. I've seen max latency peaks as high as 10ms on the EVO, so the increase in max latency is a bit less severe than what the data here indicates (but it's still large).
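For readers who want a feel for what write combining involves, here is a minimal sketch in Python. It is an illustration under stated assumptions, not RAPID's actual algorithm: the `WriteCoalescer` class, its size threshold and the LBA-sorted flush order are all hypothetical.

```python
class WriteCoalescer:
    """Toy write-combining buffer: batches small writes, flushes them as one large write."""

    def __init__(self, device_write, max_pending_bytes=128 * 1024):
        self.device_write = device_write            # callable: list of (lba, data) -> None
        self.max_pending_bytes = max_pending_bytes  # assumed threshold, for illustration only
        self.pending = {}                           # lba -> data; a rewrite replaces old data
        self.pending_bytes = 0

    def write(self, lba, data):
        old = self.pending.get(lba)
        if old is not None:
            self.pending_bytes -= len(old)
        self.pending[lba] = data
        self.pending_bytes += len(data)
        if self.pending_bytes >= self.max_pending_bytes:
            self.flush()

    def flush(self):
        """Honor a flush command: push everything pending to the device in LBA order."""
        if not self.pending:
            return
        batch = sorted(self.pending.items())
        self.device_write(batch)
        self.pending.clear()
        self.pending_bytes = 0


device_batches = []
coalescer = WriteCoalescer(device_batches.append, max_pending_bytes=16 * 1024)
for lba in (7, 3, 9, 1):
    coalescer.write(lba, b"x" * 4096)  # the fourth 4KB write reaches 16KB and triggers a flush
coalescer.write(42, b"y" * 4096)       # still only in memory: lost if the system crashed now
print(len(device_batches), [lba for lba, _ in device_batches[0]], list(coalescer.pending))
```

The last write in the example is the trade-off in miniature: the device sees one large batch instead of four small writes, but anything still pending in memory exists nowhere else until the next flush.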

My test system uses a quad-core Sandy Bridge, so we're looking at an additional 60 - 70% CPU load on a single core when running an unconstrained IO workload. In real world scenarios I'd expect that impact to be much lower, but there's no getting around the fact that you're spending extra cycles on doing this DRAM caching. RAPID will revert into a pass-through mode if the CPU is already tied up doing other things. The technology is really designed to make use of excess CPU and DRAM in modern day PCs.
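The resource cost of RAPID can be sanity-checked with a few lines of Python. The function names are made up for this sketch, and the per-core figure assumes that the CPU utilization in the table is an average across all four cores and that the extra work lands on a single core.

```python
GIB = 1024 ** 3


def rapid_cache_cap_bytes(system_ram_bytes, fraction=0.25, hard_cap_bytes=1 * GIB):
    """Cache ceiling: 25% of system memory or 1GB, whichever is smaller."""
    return min(int(system_ram_bytes * fraction), hard_cap_bytes)


def single_core_load_pct(total_cpu_delta_pct, cores):
    """Express a whole-system CPU delta as load on one core (assumes single-threaded work)."""
    return total_cpu_delta_pct * cores


for ram_gib in (2, 4, 8, 16):
    cap = rapid_cache_cap_bytes(ram_gib * GIB)
    print(f"{ram_gib}GB RAM -> cache cap {cap / GIB:.2f}GB")

delta = 31.18 - 13.81  # CPU utilization with RAPID enabled minus disabled
print(f"Extra load on one of 4 cores: {single_core_load_pct(delta, 4):.1f}%")
```

Under those assumptions any machine with 4GB of RAM or more hits the 1GB ceiling, and the roughly 17 point rise in overall CPU utilization works out to a little under 70% of one core.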

The potential performance upside is tremendous. While the EVO is ultimately limited by the performance of 6Gbps SATA, any requests serviced out of main memory are limited by the speed of your DRAM. In practice I never saw more than 4 - 5GB/s out of the cache, but that's still an order of magnitude better than what you'd get from the SSD itself. I ran a couple of tests with and without RAPID enabled to further characterize the performance gains:

Samsung SSD 840 EVO 250GB

| | PCMark 7 Secondary Storage Score | ATSB - Heavy 2011 Workload (Avg Data Rate) | ATSB - Heavy 2011 Workload (Avg Service Time) | ATSB - Light 2011 Workload (Avg Data Rate) | ATSB - Light 2011 Workload (Avg Service Time) |
|---|---|---|---|---|---|
| RAPID Disabled | 5414 | 229.6 MB/s | 1101.0 µs | 338.3 MB/s | 331.4 µs |
| RAPID Enabled | 5977 | 307.7 MB/s | 247.0 µs | 597.7 MB/s | 145.4 µs |
| % Increase | 10.4% | 34.0% | | 75.0% | |

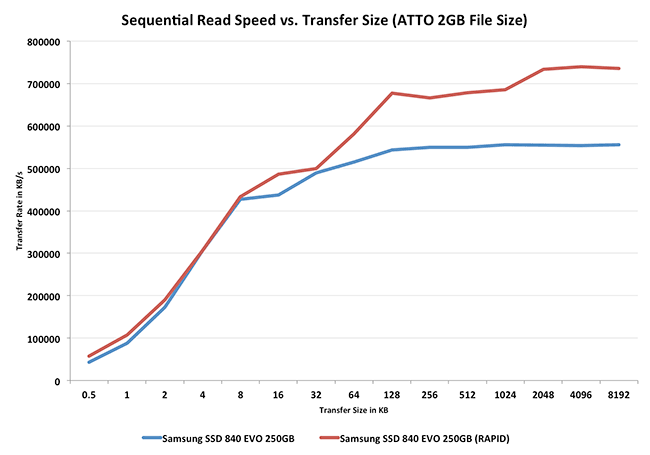

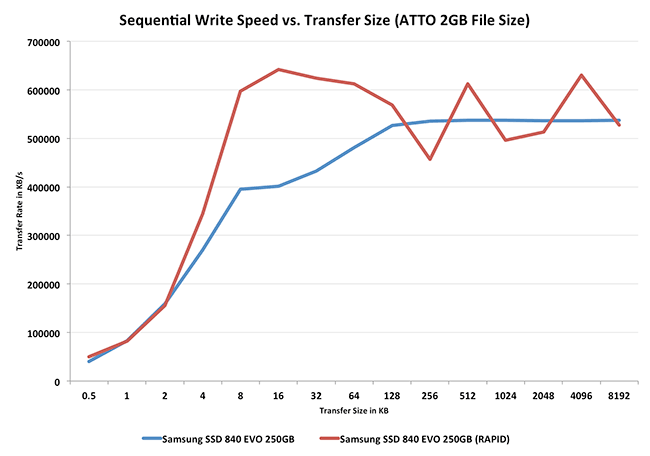

The gains in these tests range from only 10% in PCMark 7 to as much as 75% in our Light 2011 workload. I'm in the process of running a RAPID enabled drive against our Destroyer benchmark to see how it fares there. In our two storage bench tests here the impact is actually mostly on the write side; average read performance actually regresses slightly in both cases. I'm not entirely sure why that is, other than that both of these tests were designed to be a bit more write intensive than normal in order to really stress the weaknesses of SSDs at the time. To make sure that reads could indeed be cached I ran ATTO at a couple of different test sizes, starting with our standard 2GB test:

ATTO makes for a great test because we can see the impact transfer size has on RAPID's caching algorithms. Here we see pretty much no improvement until transfers get larger than 32KB, indicating an optimization for caching large block sequential reads. Note that even though ATTO's test file is 2GB in size (and RAPID's cache is limited to 1GB) we're still able to see some increase in performance. At best RAPID boosts sequential read performance by 34%, driving the 250GB EVO beyond 700MB/s. Since the test file is larger than the maximum size of the cache we're ultimately limited by the performance of the EVO itself.

Writes show a different optimization point. Here we see big uplift above 4KB transfer sizes but more or less the same performance once we move to large block sequential transfers. Again this makes sense as Samsung would want to coalesce small writes into large blocks it can burst across many NAND die, but caching large sequential transfers is just risking potential data loss in the event of a crash/unexpected power loss. Here the potential uplift is even larger - nearly 60% over the RAPID-disabled configuration.

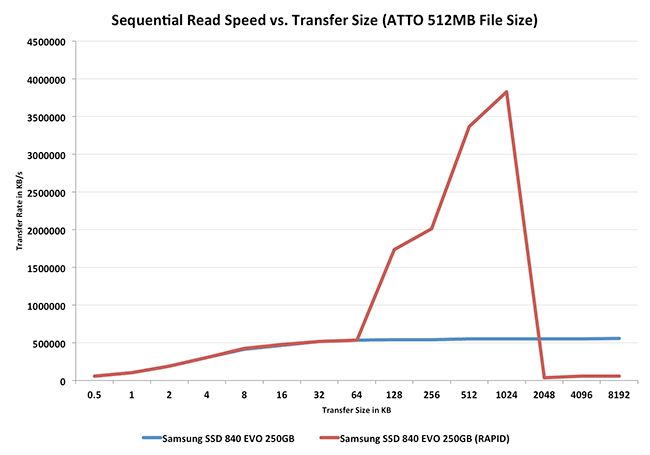

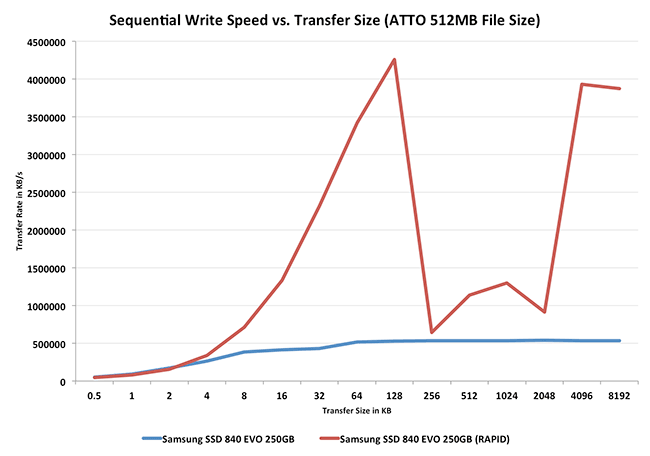

To see what would happen if the entire workload could fit within a 1GB cache I reduced the size of ATTO's test set to 512MB and re-ran the tests:

Oh man. Here performance just shoots through the roof. Max sequential read performance tops out at 3.8GB/s. Note that once again we don't see RAPID attempting to cache any smaller transfers; only large sequential transfers are of interest. Towards the end of the curve performance appears to regress when the transfer size exceeds 1MB. What's actually happening is RAPID's performance is exceeding the variable ATTO uses to store its instantaneous performance results. What we're seeing here is a 32-bit integer wrapping itself.
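The wraparound effect is easy to reproduce. The Python sketch below assumes, purely for illustration, that the result is held in an unsigned 32-bit variable counting bytes per second; the units and signedness ATTO actually uses are not known here, so the exact point at which the real tool wraps may differ.

```python
UINT32_MODULUS = 2 ** 32  # an unsigned 32-bit variable holds 0 .. 4,294,967,295


def wrap_uint32(value):
    """Return what an unsigned 32-bit variable would hold after storing `value`."""
    return value % UINT32_MODULUS


for true_rate in (3_800_000_000, 4_200_000_000, 4_500_000_000):
    stored = wrap_uint32(true_rate)
    print(f"true {true_rate / 1e9:.2f} GB/s -> stored {stored / 1e9:.2f} GB/s")
```

Once the true rate passes the largest value the variable can hold, the stored number starts again from zero, so a faster transfer shows up on the chart as a much slower one.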

Writes see similarly insane increases in performance. Here the best performance is north of 4GB/s. Unfortunately, when the entire workload can fit in the cache, Samsung appears to relax its reluctance to cache large transfers. The focus extends beyond just small file writes and we see nearly 4GB/s when we're transferring 8MB of data at a time. We're likely also seeing the same issue where RAPID's performance is so high that it's overflowing the 32-bit integer ATTO uses to report it.

While I appreciate the tremendous increase in both read and write performance, part of me wishes that Samsung would be more conservative in buffering writes. Although the cache map is stored on the C: drive and is persistent across boots, any uncommitted (non-flushed) writes in the DRAM cache run the risk of not making it to disk in the event of a crash or power loss. Samsung is quick to point out that Windows issues flush commands regularly, so the risk should be as low as possible, but you're still risking more than you would had you not deployed another DRAM cache. If you've got a stable system connected to a UPS (or a notebook on a battery) this will sound like paranoia, but it's still a concern.

If, however, you want to get PCIe-like SSD speeds without shelling out the money for a PCIe SSD, Samsung's RAPID is the closest you'll get.

137 Comments


B0GiE-uk- - Thursday, July 25, 2013 - link

Seeing as this drive is similar to the 840 basic, it will be interesting to see the performance of the 840 Pro with the rapid software enabled. Has the potential to be faster than the EVO. I have heard that the rapid software will be backwards compatible.

sheh - Thursday, July 25, 2013 - link

Caching speed is based on RAM, flushing speed on drive. I don't think there will be any surprises.

Heavensrevenge - Thursday, July 25, 2013 - link

Finally we're seeing a transition to RAM caches. It's nice that a RAM disk is being utilized, and I hope the trend continues so that the HDD/SSD can actually be taken out of the storage hierarchy for the OS & operating memory and EVERYTHING can reside in a non-volatile RAM space together for CRAZY increases in perf, since HDDs in a way are a side-effect of old memories being so small that there had to be a drive backing the RAM. But of course we need traditional storage for actual storage purposes afterwards. But I'll hope for a migration of RAM towards a similarly fast combination of RAM+Drive being the main root drive built right onto the motherboards in a stick-like way within 10 years, to cause a nice little computing revolution via re-architecting the classical storage hierarchy, which now, I believe, is quite possible and reasonable.

DanNeely - Thursday, July 25, 2013 - link

Modern OSes have been doing ram cache for years. Samsung is able to "cheat" with rapid because they've got a much better view of what the drive is doing internally to optimize for it (even if the data isn't normally exposed via standard APIs). Eventually OS authors will catch up and have SSD optimized caches instead of HDD optimized ones and it will again be a moot point.

Jaybus - Thursday, July 25, 2013 - link

Yes. It is doing the same thing as the O/S cache, but using a different algorithm to decide which blocks to cache, one that is tailored to SSD. So the O/S is very likely to adopt something similar in future.

What is more interesting is TurboWrite. If you consider the on board DRAM a L1 cache, then TW implements a more-or-less L2 cache in NAND by using some of the NAND array in SLC mode instead of TLC mode. In addition to greater endurance, SLC mode allows much faster P/E cycles than TLC (or MLC). And unlike the DRAM cache, the SLC-mode NAND cache is not susceptible to power failure data loss. It still is not nearly as fast as DRAM, so the L1 DRAM cache is still needed. Encryption would kill performance without DRAM. But because data can be moved from DRAM cache to SLC cache more quickly, it frees up DRAM at a faster rate and increases throughput. So unless writing an awful lot of data continuously, you essentially get SLC performance from a TLC drive. That is the EVO (lutionary) thing about this drive, much more so than RAPID software.

Heavensrevenge - Thursday, July 25, 2013 - link

Heh yes of course, I mean removing the "hard drive/solid state drive" out of the storage hierarchy completely and putting all OS and cache data into non-volatile silicon where the ram sits today, making all operations go as fast as ramdisk speed, not just have it there as a way to hide latency. Like boot from the modules plugged directly into the motherboard and everything :) THAT'S what I'd love to see, 1-2GB/s 4K read & write speeds all-around, not just for special use cases. All because the fab process is becoming small enough to fit the amount of data there, so we can actually get rid of that part of the storage hierarchy, if you know what I mean.

Spunjji - Friday, July 26, 2013 - link

I think there's always going to be a space for slower, more density-efficient storage in any sensible storage hierarchy. I think what you're looking forwards to is MRAM / PRAM, though. :)

Heavensrevenge - Saturday, July 27, 2013 - link

MRAM or any NVRAM is basically the concept I was wanting :) Thank you for the reference!!

The day/year/decade that type of memory becomes our RAM & OS/Boot drive replacement in the storage hierarchy will be one of the best times in modern computing history.

Honestly all HDD/SSD manufacturers should stop wasting their R&D on this type of crap even though SSD's are a wonderful "now" solution to the problem and I'll still recommend them for the time being.

The sooner that type of memory is our primary 1st level storage directly addressable from the CPU the better our modern world of computing will become and begin evolving again.

MrSpadge - Saturday, July 27, 2013 - link

I don't think Samsung is doing anything better here, or working some SSD-magic. They're just being much more aggressive with caching than Win dares to be.

Touche - Thursday, July 25, 2013 - link

I don't think your tests are representative of most people's usage, especially for these drives. TurboWrite should prove to be a much better asset for most, so the drive's performance is actually quite better than this review indicates.