LG G2 and MSM8974 Snapdragon 800 - Mini Review

by Brian Klug on September 7, 2013 1:11 AM EST

Posted in: Smartphones, LG, Mobile, LG G2, Android 4.2, MSM8974, Snapdragon 800

Battery Life

One of the things Qualcomm promised would come with Snapdragon 800 (8974), and by extension the process improvement with 28nm HPM, was lower power consumption, especially versus Snapdragon 600 (8064). Improvements throughout the Snapdragon 800 platform help as well, including a newer PMIC (PM8941) and the newer onboard modem block, and overall platform power goes down in the lower performance states. In addition, the G2 has a few unique power saving features of its own, including display GRAM (Graphics RAM), which enables the equivalent of panel self refresh for the display. When the display is static, the G2 can turn off parts of the display subsystem and AP to save power, which LG purports increases battery life in the mixed use case by 10 percent overall, and by 26 percent compared to the actively refreshing display equivalent. The G2 also has a fairly sizable 3000 mAh, 3.8V (11.4 watt-hour) battery, which is stacked to get the most out of the rounded shape of the device and uses LG's new SiO+ anode for increased energy density compared to a conventional graphite anode.
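As a quick sanity check on the quoted battery figures, rated capacity multiplied by nominal voltage gives stored energy. The snippet below is only an illustrative sketch; the function name `battery_energy_wh` is my own and does not come from any LG or Qualcomm tool.

```python
def battery_energy_wh(capacity_mah: float, nominal_voltage_v: float) -> float:
    """Return stored energy in watt-hours from rated capacity and nominal voltage."""
    # mAh -> Ah, then Ah * V = Wh
    return capacity_mah / 1000.0 * nominal_voltage_v


# LG G2 figures as quoted in the text: 3000 mAh at 3.8V nominal
g2_energy_wh = battery_energy_wh(3000, 3.8)
print(f"{g2_energy_wh:.1f} Wh")
```

For the G2's 3000 mAh at 3.8V this reproduces the 11.4 watt-hour figure.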

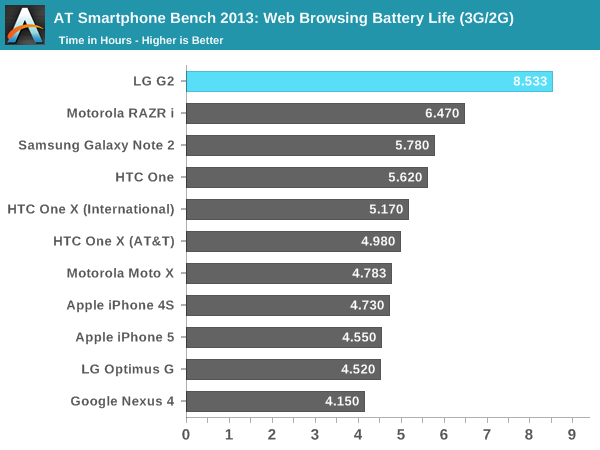

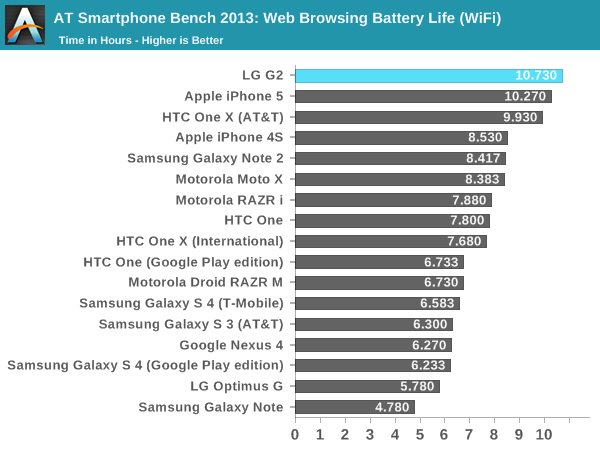

Our battery life test is unchanged: we calibrate the display to exactly 200 nits and run the device through a controlled workload consisting of a dozen or so popular pages and articles, with pauses in between, until the device dies. This is repeated on cellular and WiFi. Since we have an international model of the G2 that lacks the LTE bands used in the USA, the cellular test in this case is 3G WCDMA on AT&T's Band 2 network. I've been testing 3G battery life on devices alongside LTE for a while now, though, so we still have some meaningful comparisons. The most interesting comparisons are to the previous generation: the Optimus G (APQ8064) and HTC One (APQ8064T).

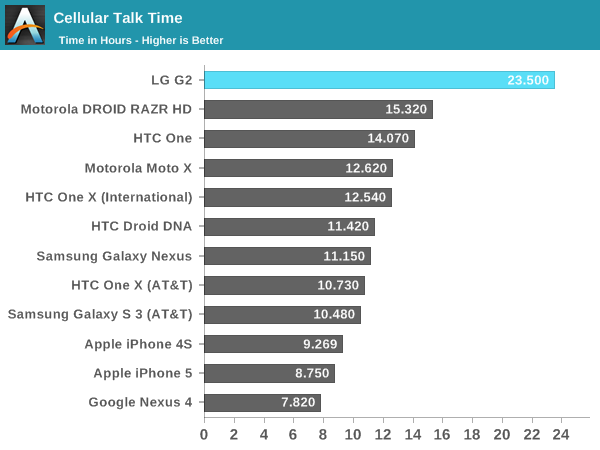

The LG G2 battery life is shockingly good through our tests, and in subjective use. The combination of larger battery, GRAM for panel self refresh, new HK-MG process, and changes to the architecture dramatically improves things for the G2 over the Optimus G. While running the two web browsing tests I suspected that the G2 might be the first phone to break 24 hours in my call test. It doesn't quite get there, but it comes tantalizingly close at 23.5 hours. I'm very impressed with the G2 battery life.
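To put the 23.5 hour call result in perspective, dividing battery energy by runtime gives a rough average platform power. This is a back-of-the-envelope sketch, not a measurement: it assumes the full rated 11.4 watt-hours is usable and ignores conversion losses and the shutdown voltage cutoff, and the helper name `average_power_w` is hypothetical.

```python
def average_power_w(energy_wh: float, runtime_h: float) -> float:
    """Return average power in watts, assuming all rated energy is consumed."""
    if runtime_h <= 0:
        raise ValueError("runtime_h must be positive")
    return energy_wh / runtime_h


# Assumption: the full rated 11.4 Wh is drained over the 23.5 hour call test
call_power_w = average_power_w(11.4, 23.5)
print(f"{call_power_w * 1000:.0f} mW")
```

Under those assumptions the average draw during the call test comes out a little under half a watt.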

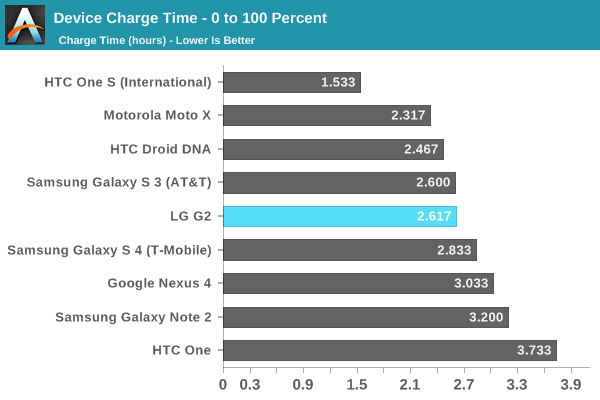

The G2 also charges very fast for its battery size. I've been profiling charging behavior and current for devices for a while now, since I strongly believe that battery life and charging speed are complementary problems. You should always charge your smartphone opportunistically, so being able to draw as much power as possible while you have access to an outlet is critical. The G2 can negotiate a 2A charge rate on my downstream charge port controller and charges very fast in that mode. The PM8941 PMIC also includes some new features that Qualcomm has given QuickCharge 2.0 branding.

120 Comments


Krysto - Sunday, September 8, 2013 - link

Cortex A9 was great efficiency-wise, with better perf/Watt than what Qualcomm had available at the time (S3 Scorpion), but Nvidia still blew it with Tegra 3. So no, that's not the only reason. Nvidia can do certain things to increase efficiency and performance/Watt, like moving to a smaller node, or keeping the GPU clock speed low but adding more GPU cores, and so on. But they aren't doing any of that.

UpSpin - Sunday, September 8, 2013 - link

You mean they could and should have released more iterations of Tegra 3, adding more and more GPU cores to improve at least the graphics performance, rather than waiting for A15 and Tegra 4?

I never designed a SoC myself :-D so I don't know how hard it is, but I did lots of PCBs, which is practically the same except on a much larger scale :-D If you add some parts you have to increase the die size, and thus move other parts on the die around, reroute the stuff, etc. So it's still a lot of work. The main bottleneck of Tegra 3 is memory bandwidth, so adding more GPU cores without addressing the memory bandwidth would most probably not have made any sense.

They probably expected to ship Tegra 4 SoCs sooner, thus they saw no need in releasing a totally improved Tegra 3 and focused on Tegra 4.

And if you compare Tegra 4 to Tegra 3, then they did exactly what you wanted, moving to a smaller node, increasing the number of GPU cores, moving to A15 while maintaining the power efficient companion core, increasing bandwidth, ...

ESC2000 - Sunday, September 8, 2013 - link

I wonder whether it is more expensive to pay to license ARM's A9, A15, etc (thought they were doing an A12 as well?) or to develop it yourself like Qualcomm does. Obviously QCOM isn't starting from scratch every time, but R&D adds up fast.

This isn't a perfect analogy at all but it makes me think of the difference between being a pharmaceutical company that develops your own products and one that makes generic versions of products someone else has already developed once the patent expires. Of course now in the US many companies that technically make their own products from scratch really just take a compound already invented and tweak it a little bit (isolate the one useful isomer, make the chiral version, etc), knowing that it is likely their modified version will be safe and effective just as the existing drug hopefully is. They still get their patent, which they can extend through various manipulations like testing in new populations right before the patent expires, but the R&D costs are much lower. Consumers therefore get many similar versions of drugs that rely on one mechanism of action (see all the SSRIs) and few other choices if that mechanism does not work for them. Not sure how I got off into that but it is something I care about and now maybe some Anandtech readers will know haha.

krumme - Sunday, September 8, 2013 - link

Great story mate :), i like it.

balraj - Saturday, September 7, 2013 - link

My first comment on Anandtech

The review was cool... I'm impressed by g2 battery life n camera...

Wish Anandtech can have a UI section

Also can you ppl confirm if lg will support g2 with at least 2 yrs of software updates

That's gonna be deciding factor in choosing between g2 or nexus 5 for most of us !!!!!!!

Impulses - Saturday, September 7, 2013 - link

Absolutely nobody can guarantee that, even if an LG exec came out and said so there's no guarantee they wouldn't change their mind or a carrier wouldn't delay/block an update... If updates are that important to you, then get a Nexus, end of story.

adityasingh - Saturday, September 7, 2013 - link

@Brian could you verify whether the LG G2 uses Snapdragon 800 MSM8974 or MSM8974AB?

The "AB" version clocks the CPU at 2.3GHz, while the standard version tops out at 2.2GHz.. However you noted in your review that the GPU is clocked at 450MHz.. If I recall correctly, the "AB" version runs the GPU at 550MHz.. while the standard is 450MHz

So in this case the CPU points to one bin.. but the GPU points to another.. Can you please confirm?

Nice "Mini Review" otherwise.. Am looking forward to the full review soon.. Please include the throttling analysis like the one from the MotoX. It would be nice to see how long the clocks stay at 2.3GHz :)

Krysto - Sunday, September 8, 2013 - link

He did mention it's the first, not the latter.

neoraiden - Saturday, September 7, 2013 - link

Brian could you comment on how the lumia 1020 compares to a cheap ($150-200) camera as I was impressed by the difference in colour for the video comparison even if ois wasn't the best.

I currently have a note 2 but the camera quality in low light conditions is just too bad, also the inability to move apps to my memory card has been annoying. I have an upgrade coming up in January I think, but I might try to change phone before. I was wondering whether you could comment on whether the lumia 1020 is worth the jump from android due to picture quality or will an htc one or nexus 5 (if similar to the g2) suffice? I was considering the note 3 as I like everything else but it still doesn't have ois or would the note 3 with a cheap compact be better even given the inconvenience of having to bring a camera?

The main day to day use of my phone is news apps, Internet, email some threaded (which I hear is a problem for windows phone).

abrahavt - Sunday, September 8, 2013 - link

I would wait to see what camera nexus 5 would have. Alternative is to get the Sony QX 100 and you would get great pictures irrespective of the phone