The Best Server CPUs Compared, Part 1

by Johan De Gelas on December 22, 2008 10:00 PM EST - Posted in IT Computing

The past several months have seen both Intel and AMD introduce interesting updates to their CPU lines. Intel started with the E-stepping of the Xeon. Even at 3GHz, the four cores of the Xeon 5450 need at most 80W, and if speed is all you care about, a 120W 5470 is available at 3.33GHz. The big news, of course, came from AMD. The "only native x86 quad-core" is finally shining bright thanks to a very successful transition to 45nm immersion lithography, as you can read here. The result is a faster and larger 6MB L3 cache, higher clock speeds, and lower memory latency. AMD's quad-core is finally ready to be a Xeon killer.

So it was time for a new server CPU shootout, as server buyers are confronted with quickly growing server CPU pricelists. Talking about pricelists, is someone at marketing taking revenge on a strict math teacher who made him/her suffer a few years ago? How else can you explain that the Xeon 5470 is faster than the 5472, and that the Xeon 5472 and 5450 are running at the same clock speed? The deranged Intel (and to a lesser degree AMD) numbering system now forces you to read through spec sheets the size of a phone book just to get an idea of what you are getting. Or you could use a full-blown search engine to understand what exactly you can or will buy. The marketing departments are happy though: besides the technical white papers you need to read to build a server, reading white papers to simply buy a CPU is now necessary too. Market segmentation and creative numbering…a slightly insane combination.

Anyway, if you are an investor trying to understand how the different offerings compare, or you are out to buy a new server and are asking yourself what CPU should be in there, this article will help guide you through the newest offerings of Intel and AMD. In addition, as the Xeon 55xx - based on the Nehalem architecture - is not far off, we will also try to see what this CPU will bring to the table. This article is different from the previous ones, as we have changed the collection of benchmarks we use to evaluate server CPUs. Read on, and find out why we feel this is a better and more realistic approach.

Breaking out of the benchmark prison

When I first started working on this article, I immediately started to run several of our "standard" benchmarks: CINEBENCH, Fritz Chess, etc. As I started to think about our "normal" benchmark suite, I quickly realized that this article would become imprisoned by its own benchmarks. It is nice to have a mix of exotic and easy-to-run benchmarks, but is it wise to build an article and its analysis around such an approach? How well does this reflect the real world? If you are actually buying a server or are trying to understand how competitive AMD products are with Intel's, such a benchmark mix is probably only confusing for people who want to understand what decisions to make. For example, it is very tempting to run a lot of rendering and rarely used benchmarks as they are either easy to run or easy to find, but that gives a completely distorted view of how the different products compare. Of course, running more benchmarks is always better, but if we want to give you good insight into how these server CPUs compare, there are two ways to do it: the "micro architecture" approach and the "buyer's market" approach.

With the micro architecture approach, you try to understand how well a CPU deals with branch/SSE/Integer/Floating Point/Memory intensive code. Once you have analyzed this, you can deduce how a particular piece of software will probably behave. It is the approach we took in "AMD's 3rd generation Opteron versus Intel's 45nm Xeon: a closer look". These types of articles are a lot of fun to write, but they only allow those who have profiled their code to understand how well the CPU will do with their own code.

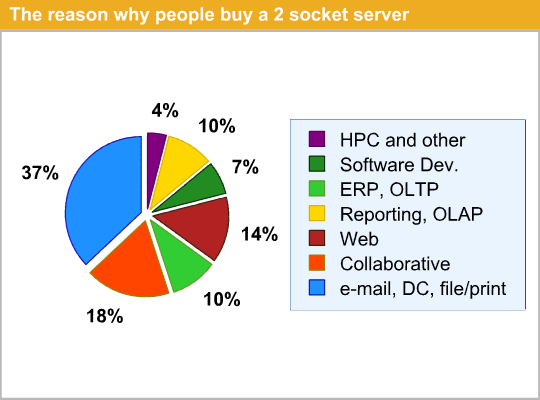

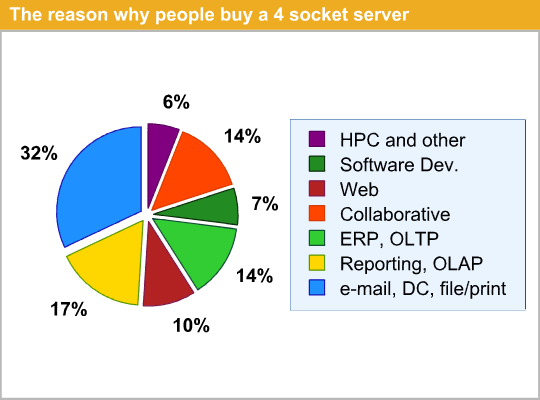

The second approach is the "buyer's market" approach. Before we dive into new Xeons and Opterons, we should ask ourselves: "why are people buying these server CPUs?" Luckily, IDC reports[1] answer this question. Even though you have to take the results below with a grain of salt, they give us a rough idea of what these CPUs are used for.

IT infrastructure servers like firewalls, domain controllers, and e-mail/file/print servers are the most common reasons why servers are bought. However, file and print servers, domain controllers, and firewalls are rarely limited by CPU power. So we have the luxury of ignoring them: the CPU decision is a lot less important in these kinds of servers. The same is true for software development servers: most of them are for testing purposes and are underutilized. Mail servers (probably 10% out of the 32-37%) are more interesting, but currently we have no really good benchmark comparisons available, since Microsoft's Exchange benchmark was unfortunately retired. We are currently investigating which e-mail benchmark should be added to our benchmarking suite. However, it seems that most mail server benchmarking boils down to storage testing. This subject is to be continued, and suggestions are welcome.

Collaborative servers really deserve more attention too, as they comprise 14 to 18% of the market. We hope to show you some benchmarks on them later. Unfortunately, developing new server benchmarks takes time.

ERP and heavy OLTP databases account for up to 17% of the shipments, and this market is even more important if you look at the revenue. That is why we discuss the SAP benchmarks published elsewhere, even though they are not run by us. We'll add Oracle Swingbench in this article to make sure this category of software is well represented. You can also check Jason's and Ross' AMD Shanghai review for detailed MS SQL Server benchmarking. With Oracle, MS SQL Server, and SAP, which together dominate this part of the server market, we have covered this part well.

Reporting and OLAP databases, also called decision support databases, will be represented by our MySQL benchmark. Last but not least, we'll add the MCS eFMS web server test -- an ultra real world test -- to our benchmark suite to make sure the "heavy web" applications are covered too. It is not perfect, but this way we cover the actual market a lot better than before.

Secondly, we have to look at virtualization. According to IDC reports, 35% of the servers bought in 2007 were bought to be virtualized. IDC expects this number to climb up to 52% in 2008 [2]. Unfortunately, as soon as we upgraded the BIOS of our quad socket platform to support the latest Opteron, it would neither allow us to install ESX nor let us enable power management. That is why we had to postpone our server review for a few weeks, and that is why we split it into two parts. For now, we will look at the VMmark submissions to get an idea of how the CPUs compare when it comes to virtualization.

In a nutshell, we're moving towards a new way of comparing server CPUs. We combine the more reliable industry standard benchmarks (SAP, VMmark) with our own benchmarks and try to give you a benchmark mix that comes closer to what the servers are actually bought for. That should allow you to get an overview that is as fair as possible. Performance/watt is still missing in this first part, but a first look is already available in the Shanghai review.
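As a rough illustration of what a market-weighted benchmark mix could look like, here is a minimal Python sketch. It is not the method used in this article: the function name `weighted_geomean`, the choice of a weighted geometric mean, and every score in the example are assumptions made for illustration. Only the 17% ERP/OLTP share and the 14-18% collaborative range (midpoint 16%) come from the text; the other shares are placeholders.

```python
import math


def weighted_geomean(relative_scores, shares):
    """Weighted geometric mean of per-segment relative scores.

    relative_scores: segment -> performance relative to a baseline CPU
                     (1.0 means equal to the baseline).
    shares:          segment -> market share weight on any positive scale.

    Segments that have a share but no score are skipped, and the
    remaining weights are renormalized so that they sum to 1.
    """
    covered = [seg for seg in shares if seg in relative_scores]
    if not covered:
        raise ValueError("no benchmarked segment has a market share")
    total = sum(shares[seg] for seg in covered)
    log_sum = sum(
        shares[seg] / total * math.log(relative_scores[seg]) for seg in covered
    )
    return math.exp(log_sum)


if __name__ == "__main__":
    # Shares in percent of shipments. Only "erp_oltp" (17) and
    # "collaborative" (midpoint of 14-18) come from the article;
    # "olap" and "web" are placeholder values.
    shares = {"erp_oltp": 17.0, "collaborative": 16.0, "olap": 10.0, "web": 10.0}

    # Hypothetical scores of CPU B relative to CPU A, made up for illustration.
    # There is no collaborative benchmark yet, so that segment is skipped.
    scores = {"erp_oltp": 1.20, "olap": 0.90, "web": 1.00}

    print(f"weighted relative score: {weighted_geomean(scores, shares):.3f}")
```

A geometric mean is used here because the inputs are ratios; a plain weighted average would also work but would let one outlier benchmark dominate the total more easily.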

29 Comments


Theunis - Wednesday, December 31, 2008 - link

I'm not entirely happy with the results, but I would also like to know the -march that was used to compile the 64-bit Linux kernel, MySQL, and any other Linux benchmarks, because optimizing for a specific -march is crucial to comparing apples with apples. OK, yes, AMD wins assuming standard/generic compile flags were used. But what if both were to use optimized architecture compile flags? Then if AMD loses, they could blame gcc. But it would still be interesting to see the outcome for open source support, and how it relates to vendor support for open source and to the distributions that go so far as to support architecture-specific builds (the Gentoo Linux distribution comes to mind).

Intel knew that they were lagging behind because their architecture was too specific and wasn't running as efficiently on generically compiled code. Core i7 was supposed to fix this. But what if this is still not the case, and architecture-specific optimization is still necessary to see any improvements on Intel's side?

James5mith - Tuesday, December 30, 2008 - link

Just wanted to mention that I love that case. The Supermicro 846 series is what I'm looking towards to do some serious disk density for a new storage server. I'm just wondering how much the SAS backplane affects access latencies, etc. (If you are using the one with the LSI expander chip.)

Krobaruk - Saturday, December 27, 2008 - link

Just wanted to add a few bits of info. Back to an earlier comment: it is definitely incorrect to call SAS and InfiniBand the same. The cables are in fact of slightly different composition (differences in shielding), although they are terminated the same. Let's not forget that 10G Ethernet uses the same termination in some implementations too.

Also, at least under Xen, AMD platforms still do not offer PCI passthrough. This is a fairly big inconvenience and should probably be mentioned, as support is not expected until the HT3 Shanghais release later this year. Particularly interesting are the results here, which show that despite NUMA greatly reducing load on the HT links, it makes very little difference to the VMmark result:

http://blogs.vmware.com/performance/2007/02/studyi...

I would imagine HT3 will only really benefit 8-way Opterons in the typical figure-of-8 config, as the bus is that much more heavily loaded.

It's odd your Supermicro quad had problems with the Shanghais and certain apps; no problems from testing with a Tyan TN68 here. Was the BIOS beta?

Are any benches with Xen likely to happen in future, or is AnandTech solidly VMware?

Answering another user's questions about mail servers and SpamAssassin: I do not know of any decent benchmarks for this, but I have seen the load that different mail filter servers can handle under the MailWatch/Mailgate system. Fast SAS RAID 1 and dual quad cores seem to give the best value, and the RAM requirement is about 1GB per core. It would be interesting to see some sort of Linux mail filter benchmarks if you can construct something to do this.

RagingDragon - Saturday, December 27, 2008 - link

I'd imagine a lot of software development servers are used for testing of applications which fall into one of the other categories. There'd also be bug tracking and version control servers, but most interesting from a performance perspective might be build servers (i.e. servers used for compiling software) - the best benchmark for that would probably be compile times for various compilers (i.e. GNU, Intel and MS C/C++ compilers; Sun, Oracle and IBM Java compilers; etc.)

bobbozzo - Friday, December 26, 2008 - link

IMO, many (most?) mailservers need more than fast I/O; what with so much anti-spam and anti-virus filtering going on nowadays.

SpamAssassin, although wonderful, can be slow under heavy loads and with many features enabled, and the same goes for A/Vs such as clamscan.

That said, I don't know of any good benchmarks.

vtechk - Thursday, December 25, 2008 - link

Very nice article, just the Fluent benchmarks are far too simple to give relevant information. A standard Fluent job these days has 25+ million elements, so sedan_4m is more of a synthetic test than a real world one. It would also be very interesting to see Nastran, Abaqus and PamCrash numbers.

Amiga500 - Sunday, December 28, 2008 - link

Just to reinforce Alpha's comments... 25 million being standard isn't even close to being true!!!

Sure, if you want to perform a full global simulation, you'll need that number (and more) for something like a car (you can add another 0 to the 25 million for F1 teams!), or an aircraft.

But, mostly, the problems are broken down into much smaller numbers, under 10 million cells. A rule of thumb with Fluent is 700K cells for every GB of RAM... so working on that principle, you'd need a 16GB workstation for 10 million cells...

Anything more, and you'll need a full cluster to make the turnaround times practical. :-)
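
The arithmetic behind that rule of thumb can be sketched in a few lines of Python. This is only an illustration: the 700K cells per GB figure is the one quoted above, while the function names and the step of rounding up to the next power-of-two memory size are assumptions added here.

```python
CELLS_PER_GB = 700_000  # rule of thumb quoted above, not an official Fluent figure


def ram_needed_gb(cells, cells_per_gb=CELLS_PER_GB):
    """Estimated RAM in GB for a mesh with the given number of cells."""
    return cells / cells_per_gb


def round_up_to_power_of_two(gb):
    """Smallest power-of-two size in GB that is at least `gb`.

    Assumption: memory is bought in power-of-two sizes (8, 16, 32, ...).
    """
    size = 1
    while size < gb:
        size *= 2
    return size


if __name__ == "__main__":
    for cells in (4_000_000, 10_000_000, 25_000_000):
        estimate = ram_needed_gb(cells)
        print(f"{cells:>11,} cells: about {estimate:.1f} GB, "
              f"so a {round_up_to_power_of_two(estimate)} GB machine")
```

For 10 million cells the estimate is a little over 14GB, which is where the 16GB workstation figure comes from.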

alpha754293 - Saturday, December 27, 2008 - link

"Standard Fluent job these days has 25+ million elements"

That's NOT entirely true. The size of the simulation is dependent on what you are simulating. (And also hardware availability/limitations).

Besides, I don't think that Johan actually ran the benchmarks himself. He just dug up the results database and mentioned it here (wherever the processor specs were relevant).

He also makes a note that the benchmark itself can be quite costly (e.g. a license of Fluent can easily be $20k+), and that a degree of understanding is needed to run the benchmark itself (which he also stated that neither he nor the lab has).

And on that note - Johan, if you need help in interpreting the HPC results, feel free to get in touch with me. I sent you an email on this topic (I'm actually running the LS-DYNA 3-car collision benchmark on my systems as we speak). I couldn't find the case data to be able to run the Fluent benchmarks as well, but if I do, I'll run it and let you know.

Otherwise, EXCELLENT. Looking forward to lots more coming from you once the NDA is lifted on the Nehalem Xeons.

zpdixon42 - Wednesday, December 24, 2008 - link

Johan,

The article makes a point of explaining how fair the "Opteron Killer?" section is, by assuming that unbuffered DDR3-1066 will provide results close enough to registered DDR3-1333 for Nehalem. But what is nowhere mentioned is that all of the benchmarks unfairly penalize the 45nm Opteron because registered DDR2-800 was used whereas faster DDR2-1067 is supported by Shanghai. If you go into great length justifying memory specs for Intel, IMHO you should mention that point for AMD as well.

The Oracle Charbench graph shows "Xeon 5430 3.33GHz". This is wrong, it's the X5470 that runs at 3.33GHz, the E5430 runs at 2.66GHz.

The 3DSMax 2008 32 bit graph should show the Quad Opteron 8356 bar in green color, not blue.

In the 3DSMax 2008 32 bit benchmark, some results are clearly abnormal. For example a Quad Xeon X7460 2.66GHz is beaten by an older microarchitecture running at a slower speed (Quad Xeon 7330 2.4GHz). Why is that?

The article mentions in 2 places the Opteron "8484", this should be "8384".

The "Opteron Killer?" section says "the boost from Hyper-Threading ranges from nothing to about 12%". It should rather say "ranges from -5% to 12%" (i.e. HT degrades performance in some cases).

There is a typo in the same section: "...a small advantage at* it can use..." s/at/as/.

Also, I think CPU benchmarking articles should draw graphs to represent performance/dollar or performance/watt (instead of absolute performance), since that's what matters in the end.

JohanAnandtech - Wednesday, December 24, 2008 - link

"But what is nowhere mentioned is that all of the benchmarks unfairly penalize the 45nm Opteron because registered DDR2-800 was used whereas faster DDR2-1067 is supported by Shanghai. "

Considering that Shanghai has just made DDR-2 800 (buffered) possible, I think it is highly unlikely that we'll see buffered DDR-2 1066 very soon. Is it possible that you are thinking of Deneb which can use DDR-2 1066 unbuffered?

"In the 3DSMax 2008 32 bit benchmark, some results are clearly abnormal. For example a Quad Xeon X7460 2.66GHz is beaten by an older microarchitecture running at a slower speed (Quad Xeon 7330 2.4GHz). Why is that ? "

Because 3DS Max does not like 24 cores. See here:

http://it.anandtech.com/cpuchipsets/intel/showdoc....

"Also, I think CPU benchmarking articles should draw graphs to represent performance/dollar or performance/watt (instead of absolute performance), since that's what matters in the end. "

In most cases performance/dollar is a confusing metric for server CPUs, as it greatly depends on what application you will be running. For example, if you are spending 2/3 of your money on a storage system for your OLTP app, the server CPU price is less important. It is better to compare to similar servers.

Performance/Watt was impossible to measure, as our Quad Socket board had a beta BIOS which disabled PowerNow!, so the comparison would not have been fair.

I'll check out the typos you have discovered and fix them. Thx.