Intel and Micron Develop Hybrid Memory Cube, Stacked DRAM is Coming

by Anand Lal Shimpi on September 15, 2011 1:10 PM EST

During the final keynote of IDF, Intel's Justin Rattner demonstrated a new stacked DRAM technology called the Hybrid Memory Cube (HMC). The need is clear: if CPU performance is to continue to scale, there can't be any bottlenecks preventing that scaling from happening. Memory bandwidth has always been a bottleneck we've been worried about as an industry. Ten years ago the worry was that parallel DRAM interfaces wouldn't be able to cut it. Thankfully through tons of innovation we're able to put down 128-bit wide DRAM paths on mainstream motherboards and use some very high speed memories attached to it. What many thought couldn't be done became commonplace and affordable. The question is where do we go from there? DRAM frequencies won't scale forever and continually widening buses isn't exactly feasible.

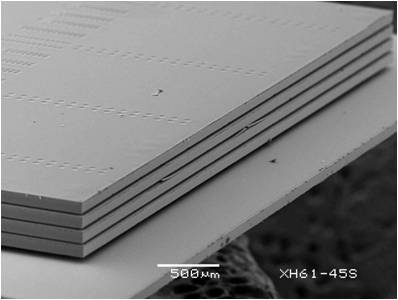

Intel and Micron came up with an idea. Take a DRAM stack and mate it with a logic process (think CPU process, not DRAM fabs) layer for buffering and routing and you can deliver a very high bandwidth, low power DRAM. The buffer layer is actually key here because it helps solve the problem of routing pins to multiple DRAM die. By using a more advanced logic process it's likely that the problem of routing all of that data is made easier. It's this stacked DRAM + logic that's called the Hybrid Memory Cube.

The prototype these two companies developed is good for up to 1 terabit per second of bandwidth. Intel claims that the technology can deliver bandwidth at 7x the power efficiency of the most efficient DDR3 available today.
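For a sense of scale, the 1 terabit per second figure can be converted to bytes and set against a conventional 128-bit memory path. What follows is a rough sketch only: the DDR3-1333 transfer rate is an assumption picked for illustration, not a number from Intel or Micron, and the math covers theoretical peak bandwidth without any real-world efficiency losses.

```python
# Back-of-the-envelope peak bandwidth comparison.
# Only the 1 Tbit/s figure and the 128-bit bus width come from the article;
# the DDR3-1333 transfer rate is an illustrative assumption.

def bits_to_gbytes_per_s(bits_per_s: float) -> float:
    """Convert bits per second to gigabytes per second (1 GB = 1e9 bytes)."""
    return bits_per_s / 8 / 1e9


def parallel_bus_gbytes_per_s(bus_width_bits: int, transfers_per_s: float) -> float:
    """Peak bandwidth of a parallel bus: width times transfer rate, in GB/s."""
    return bits_to_gbytes_per_s(bus_width_bits * transfers_per_s)


HMC_PROTOTYPE_BITS_PER_S = 1e12      # 1 terabit per second (from the article)
BUS_WIDTH_BITS = 128                 # 128-bit wide DRAM path (from the article)
ASSUMED_TRANSFERS_PER_S = 1333e6     # assumption: DDR3-1333, 1333 MT/s

hmc_gbytes = bits_to_gbytes_per_s(HMC_PROTOTYPE_BITS_PER_S)
ddr3_gbytes = parallel_bus_gbytes_per_s(BUS_WIDTH_BITS, ASSUMED_TRANSFERS_PER_S)
ratio = hmc_gbytes / ddr3_gbytes

print(f"HMC prototype:        {hmc_gbytes:.1f} GB/s")
print(f"Assumed 128-bit DDR3: {ddr3_gbytes:.1f} GB/s")
print(f"Ratio:                {ratio:.1f}x")
```

Under those assumptions the prototype works out to 125 GB/s, a bit under six times the roughly 21.3 GB/s peak of the assumed DDR3 configuration. A faster DDR3 speed grade would narrow that gap accordingly.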

The big concern here is obviously manufacturing and by extension, cost. But as with all technologies in this industry, if there's a need, they'll find a way.

19 Comments

prophet001 - Thursday, September 15, 2011 - link

that is really neat. I hope to see this become an affordable solution for home computing

HungryTurkey - Monday, January 26, 2015 - link

It will... in 12 years. Everything they're researching today, becomes reality tomorrow.

douglaswilliams - Thursday, September 15, 2011 - link

Anand,

So, in the future we could not only have a choice from Intel of which processor line, how fast and how much cache, but also how much RAM is built into it?

Doug

douglaswilliams - Thursday, September 15, 2011 - link

Even after re-reading the story I'm still confused as to what is being talked about.

Is this a CPU with on-package RAM? Similar to mobile SoCs?

Or is it just traditional RAM stacked on top of each other with some logic in-between to sort out the layers?

Doug

WeaselITB - Thursday, September 15, 2011 - link

Both and neither.

From what I've read, it's designed as a replacement for traditional DIMM sticks of RAM. Instead of buying a 4GB DDR3 stick from Corsair, you'd buy a 4GB HMC from Micron. The innovation here is they're using new ways of addressing the individual memory bits (with a logic processor) in order to speed up access across the entire section of memory.

As awesome as this sounds, I don't foresee much market traction unless Intel/Micron can get a standards body like JEDEC behind it, especially with the just-announced details of DDR4.

Further details:

http://www.micron.com/innovations/hmc.html

http://blogs.intel.com/research/2011/09/hmc.php

http://www.jedec.org/news/pressreleases/jedec-anno...

-Weasel

name99 - Thursday, September 15, 2011 - link

Current DRAM burns a huge amount of the power in laptops and phones.

You may think this has no traction. I think Apple will be all over it.

And once again, let's predict how this will play out.

The peanut gallery will complain that Apple is shipping devices that have no customizability --- which may be true. The opportunity cost of having traditional RAM slots is a huge cost in power because of having to drive power across the noisy interface between the slot and the DIMM. The tighter the RAM can be integrated with the CPU, the lower the power --- at the cost of having to decide how much RAM you want when you buy the device, with no opportunity to later change your mind.

We've seen over and over again as one part or another of the traditional PC becomes non-replaceable, in order to get (always) lower power and (sometimes) a smaller footprint --- non-replaceable CPUs, then batteries, now SSDs. Memory is simply the next on the list.

But, of course, the peanut gallery has no concept of the idea of tradeoffs, and refuses to accept that certain laws of physics exist. And so we will hear another round of choruses about how Apple is doing this in their next machines (first laptops, then mini and iMacs) because they want to screw their users over and charge them higher prices, and because Apple hates freedom. And then, when the PC manufacturers follow two years later, a deafening silence.

Meanwhile, whiners, how about that IE 10 and no Flash huh? Could it possibly be that Apple were driven by something more than an insane need to control every aspect of their user's lives?

piiman - Friday, September 16, 2011 - link

"Meanwhile, whiners, how about that IE 10 and no Flash huh? Could it possibly be that Apple were driven by something more than an insane need to control every aspect of their user's lives? "

what about it? and no

Since when has Apple been the first to use new tech? They still use old crap in their new products and price it like it's new tech. But thanks for showing your fanboyism. In case you missed it, the article is about RAM, not the magic of Apple.

minijedimaster - Friday, September 16, 2011 - link

How do you take this article and make it about Apple? Seriously? Pretty cool tech though.

HungryTurkey - Monday, January 26, 2015 - link

I think you still have a little apple on your chin, sir. They are the farthest thing from innovators in any market segment right now. Without Jobs, they are lost. Anyone who rode that roller coaster up, might want to bail before it rolls back down. Slight revisions on existing product != innovation. iPOD was innovation, iPhone was innovation, iPad was innovation when the apps came (Everyone derided it as a big iPhone without the Phone at launch). What's next for them? More in the same markets they created. Without the visionary to push insane engineers into trying out crazy ideas, they just founder and will sink. And this memory will find a home in all devices in another 10 or 12 years. Not just apple will want memory with dramatically improved efficiency.

menting - Thursday, September 15, 2011 - link

Weasel,

Micron is trying to get another large DRAM manufacturer on board so they can go to JEDEC and try to make it a standard.

Currently, it's not intended for mass market, but for specialized servers only.