Calxeda's ARM server tested

by Johan De Gelas on March 12, 2013 7:14 PM EST

Posted in: IT Computing, Arm, Xeon, Boston, Calxeda, server, Enterprise CPUs

It's a Cluster, Not a Server

When unpacking our Boston Viridis server, the first thing that stood out was the bright red front panel. That is Boston's way of telling us that we have the "Cloud Appliance" edition. The model with an orange bezel is intended to serve as a NAS appliance, purple stands for "web farm", and blue is more suited for a Hadoop cluster. Another observation is that the chassis looks similar to recent SuperMicro servers; it is indeed a barebones system filled with Calxeda hardware.

Behind the front panel we find 24 2.5” drive bays, which can be fitted with SATA disks. If we take a look at the back, we can find a standard 750W 80 Plus Gold PSU, a serial port, and four SFP connectors. Those connectors are each capable of 10Gbit speeds, using copper and/or fiber SFP(+) transceivers.

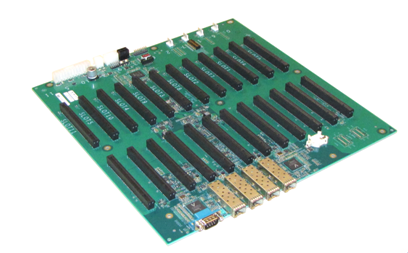

When we open up the chassis, we find somewhat less standard hardware. Mounted on the bottom is what you might call the motherboard, a large, mostly-empty PCB that contains the shared Ethernet components and a number of PCIe slots.

The 10Gb Ethernet Media Access Controller (MAC) is provided on the EnergyCore SoC, but in order to allow every node to communicate via the SFP ports, each node forwards its Ethernet traffic to one of the first four cards (the cards in slots 0-3). These nodes are connected via a XAUI interface to one of the two Vitesse VSC8488 XAUI-to-serial transceivers that in turn control two SFP modules each. Hidden behind an air duct is a Xilinx Spartan-6 FPGA, configured to act as chassis manager.

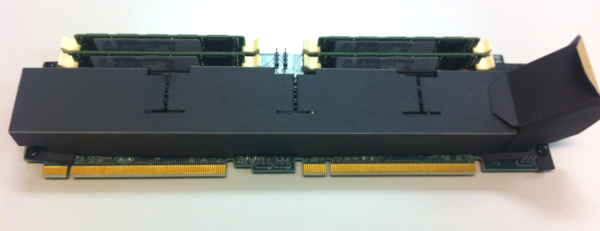

Each pair of PCIe slots contains what turns this chassis into a server cluster: an EnergyCard (EC). Each EnergyCard contains four SoCs, each with one DIMM slot. An EnergyCard thus contains four server nodes, with each node running on a quad-core ARM CPU.

The chassis can hold as many as 12 EnergyCards, so currently up to 48 server nodes. That limit is only imposed by physical space constraints, as the fabric supports up to 4096 nodes, leaving the potential for significant expansion if Calxeda maintains backwards compatibility with their existing ECs.

The system we received can hold only six ECs (one EnergyCard slot is lost because of the SATA cabling), which at four server nodes each gives 24 server nodes in total. Some creative effort has been made to provide air baffles that direct the air through the heat sinks on the ARM chips.
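The node counts quoted above follow from simple multiplication. The sketch below only restates that arithmetic; the constant and function names are illustrative, and the last figure is a derived ratio rather than a claim about how chassis can be linked.

```python
# Node-count arithmetic from the text. All names here are illustrative.
SOCS_PER_ENERGYCARD = 4      # each EnergyCard carries four SoCs, one node each
MAX_CARDS_IN_CHASSIS = 12    # chassis maximum stated in the text
CARDS_IN_REVIEW_UNIT = 6     # cards in the system we received
FABRIC_NODE_LIMIT = 4096     # number of nodes the fabric supports


def node_count(cards: int, socs_per_card: int = SOCS_PER_ENERGYCARD) -> int:
    """Return the number of server nodes provided by `cards` EnergyCards."""
    return cards * socs_per_card


max_nodes = node_count(MAX_CARDS_IN_CHASSIS)      # fully populated chassis
review_nodes = node_count(CARDS_IN_REVIEW_UNIT)   # our review configuration

# How many 48-node chassis' worth of nodes fit within the fabric limit
# (integer division; purely a ratio, not a supported topology).
chassis_equivalents = FABRIC_NODE_LIMIT // max_nodes

print(max_nodes, review_nodes, chassis_equivalents)
```

The gap between 48 nodes per chassis and the 4096-node fabric limit is what leaves the room for expansion mentioned above.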

The air baffles are made of a finicky plastic-coated paper, glued together and placed on the EC with plastic nails, making it difficult to remove them from an EC by hand. Each EC can be freely placed on the motherboard, with the exception of the Slot 0 card that needs a smaller baffle.

Every EnergyCard is thus fitted with four EnergyCore SoCs, each having access to one miniDIMM slot and four SATA connectors. In our configuration each miniDIMM slot was populated with a Netlist 4GB low-voltage (1.35V instead of 1.5V) ECC PC3L-10600W-9-10-ZZ DIMM. Every SoC provided was hooked up to a Samsung 256GB SSD (MZ7PC256HAFU, comparable to Samsung’s 310 Series consumer SSDs), filling up every disk slot in the chassis. We removed those SSDs and used our iSCSI SAN to boot the server nodes. This way it was easier to compare the system's power consumption with other servers.
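As a quick sanity check on this configuration, the per-node figures can be totalled for the whole chassis. This is only a sketch of the arithmetic using the numbers in the text; the variable names are illustrative.

```python
# Aggregate resources of the review configuration, from the figures in the text.
NODES = 24              # six EnergyCards with four SoCs each
DIMM_GB_PER_NODE = 4    # one 4GB miniDIMM per SoC
SSD_GB_PER_NODE = 256   # one 256GB Samsung SSD per SoC
DRIVE_BAYS = 24         # 2.5" bays behind the front panel

total_ram_gb = NODES * DIMM_GB_PER_NODE   # memory across all nodes
total_ssd_gb = NODES * SSD_GB_PER_NODE    # raw SSD capacity as delivered
bays_used = NODES                         # one SSD per SoC

print(f"RAM: {total_ram_gb} GB, SSD: {total_ssd_gb} GB, "
      f"bays used: {bays_used}/{DRIVE_BAYS}")
```

One SSD per node accounts for all 24 bays, which matches the observation that every disk slot in the chassis was filled.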

Previous EC versions had a microSD slot per node at the back, but in our version it has been removed. The cards are topology-agnostic; each node is able to determine where it is placed. This enables you to address and manage nodes based on their position in the system.
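To illustrate what position-based addressing could look like, here is a minimal sketch that maps a (card slot, SoC) position to a flat node index and back. The numbering scheme is an assumption made purely for illustration; the text does not describe how Calxeda's management software actually numbers nodes, and the function names are hypothetical.

```python
# Hypothetical position-based node numbering; NOT Calxeda's documented scheme.
SOCS_PER_CARD = 4  # four SoCs per EnergyCard, as described in the text


def position_to_index(slot: int, soc: int) -> int:
    """Map a card slot and SoC position on that card to a flat node index."""
    if slot < 0 or not 0 <= soc < SOCS_PER_CARD:
        raise ValueError("slot must be >= 0 and soc must be in 0..3")
    return slot * SOCS_PER_CARD + soc


def index_to_position(index: int) -> tuple[int, int]:
    """Inverse mapping: flat node index back to (slot, soc)."""
    if index < 0:
        raise ValueError("index must be >= 0")
    return divmod(index, SOCS_PER_CARD)


# Under this assumed scheme, the last SoC on the sixth card (slot 5)
# is node 23 in a 24-node system.
print(position_to_index(5, 3), index_to_position(23))
```

Because the index depends only on where a card sits, moving a card to another slot would change its nodes' indices without any per-card configuration, which is the practical benefit of topology-agnostic cards.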

99 Comments


tuxRoller - Tuesday, March 12, 2013

Why WOULD you expect DVFS to boost performance? You seem to think it slightly revelatory that the scores are slightly lower (but perhaps statistically meaningless).

dig23 - Tuesday, March 12, 2013

On-demand seems a fair choice to me; it's the best you can do on these OSes. But I will be very interested to see energy efficiency numbers when DVFS is working on a swarm of ARM nodes... :)

tuxRoller - Tuesday, March 12, 2013

It's not the CPU governor I'm talking about but DVFS in particular. There's bound to be some small amount of latency involved with the process.

Its point isn't best performance but energy efficiency, which is why I made the comment in the first place.

JarredWalton - Tuesday, March 12, 2013

There's the potential for DVFS to optimize for better performance on a few cores while putting some of the other cores into a lower P-state, but I think that would be more for stuff like Turbo Boost/Turbo Core. It's also possible Johan is referring to the potential for the optimizations to simply improve performance in general.

JohanAnandtech - Wednesday, March 13, 2013

Can you tell me where I got you confused? Because I write "This allowed us to make use of Dynamic Voltage and Frequency Scaling (DVFS, P-states) using the CPUfreq tool. First let's see if all these power saving tweaks have reduced the total throughput." So it should have been clear that we are looking for a better performance/watt ratio. The interesting thing to note is that ARM benefits from P-states, and that Intel's excellent implementation of C-states makes P-states almost useless.

Twonky - Wednesday, March 13, 2013

For information: about a year ago, the following post on the LinkedIn ARM Based Group gave a link to an M.Sc. thesis publishing figures on the performance/watt ratio for Cortex-A8 and Cortex-A9 based boards: www.linkedin.com/groups/Single-CortexA8-CortexA9-in-comparison-85447.S.84348310

AncientWisdom - Tuesday, March 12, 2013

Very interesting read, thanks!

staiaoman - Tuesday, March 12, 2013

Damn, Johan. As always, an incredible writeup. Interesting thought experiment to figure that an upper bound on damage to INTC server share might be found by simply looking at how much of the market is running applications like your web server here (where single-threaded performance isn't as important). Intel powering phones and ARM chips in servers... the end is nigh.

JohanAnandtech - Thursday, March 14, 2013

Thanks Staiaoman :-). I'll leave the thought experiment to you :-)