Intel's Xeon E5-2600 V2: 12-core Ivy Bridge EP for Servers

by Johan De Gelas on September 17, 2013 12:00 AM EST

What Has Improved?

Ivy Bridge is what Intel calls a tick+, a transition to the latest 22nm process technology (the famous P1270 process) with minor architectural optimizations compared to predecessor Sandy Bridge (described in detail by Anand here):

- Divider is twice as fast

- MOVs take no execution slots

- Improved prefetchers

- Improved shift/rotate and split/Load

- Buffers are dynamically allocated to threads (not statically split in two parts for each thread)

Given the changes, we should not expect a major jump in single-threaded performance. Anand made a very interesting Intel CPU generational comparison in his Haswell review, showing the IPC improvements of the Ivy Bridge core are very modest. Clock for clock, the Ivy Bridge architecture performed:

- 5% better in 7-zip (single-threaded test, integer, low IPC)

- 8% better in Cinebench (single-threaded test, mostly FP, high IPC)

- 6% better in compiling (multi-threaded, mostly integer, high IPC)

So the Ivy Bridge core improvements are pretty small, but they are measurable across very different kinds of workloads.
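To put a single number on those three results, one option is to average the speedup ratios. The short Python sketch below does that with a geometric mean; the choice of a geometric mean is our assumption (the original comparison only lists the individual results), and the variable names are purely illustrative.

```python
import math

# Clock-for-clock gains of Ivy Bridge over Sandy Bridge, as quoted above.
speedups = {"7-zip": 1.05, "Cinebench": 1.08, "compiling": 1.06}

def geometric_mean(values):
    """Geometric mean, a common way to average speedup ratios."""
    values = list(values)
    return math.exp(sum(math.log(v) for v in values) / len(values))

average_gain = geometric_mean(speedups.values())
print(f"Average clock-for-clock gain: {(average_gain - 1) * 100:.1f}%")
```

The result lands a little above 6%, which matches the "very modest" description.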

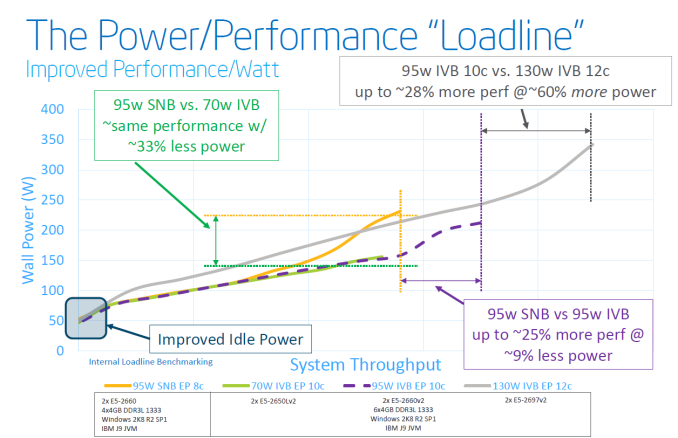

The core architecture improvements might be very modest, but that does not mean that the new Xeon E5-2600 V2 series will show insignificant improvements over the previous Xeon E5-2600. The largest improvement comes of course from the P1270 process: 22nm tri-gate (instead of 32nm planar) transistors. Discussing the actual quality of Intel process technology is beyond our expertise, but the results are tangible:

Focus on the purple text: within the same power envelope, the Ivy Bridge Xeon is capable of delivering 25% more performance while still consuming less power. In other words, the P1270 process allowed Intel to increase the number of cores and/or clock speed significantly. This can be easily demonstrated by looking at the high-end parts. The octal-core Xeon E5-2680 came with a TDP of 130W and ran at 2.7GHz. The E5-2697 runs at the same clock speed and has the same TDP label, but comes with four extra cores.
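The arithmetic behind that comparison can be sketched in a few lines of Python. The core counts, clock speed, and TDP come from the paragraph above; dividing TDP evenly across cores is a simplification of ours (the TDP also covers the uncore), and aggregate "core-GHz" is only a rough ceiling on throughput, not a measured result.

```python
# Figures quoted in the text. The even per-core split of TDP is a
# simplifying assumption: TDP also covers L3 cache, memory controllers, etc.
parts = {
    "Xeon E5-2680": {"cores": 8, "tdp_w": 130, "ghz": 2.7},
    "Xeon E5-2697": {"cores": 12, "tdp_w": 130, "ghz": 2.7},
}

def tdp_per_core(part):
    """TDP budget per core in watts (naive even split)."""
    return part["tdp_w"] / part["cores"]

def core_ghz(part):
    """Aggregate clock across all cores, a crude throughput ceiling."""
    return part["cores"] * part["ghz"]

old, new = parts["Xeon E5-2680"], parts["Xeon E5-2697"]
extra_core_ghz = core_ghz(new) / core_ghz(old) - 1

for name, part in parts.items():
    print(f"{name}: {tdp_per_core(part):.2f} W per core, {core_ghz(part):.1f} core-GHz")
print(f"Extra aggregate clock at the same TDP: {extra_core_ghz:.0%}")
```

Under these assumptions the per-core power budget drops from about 16W to about 11W while the aggregate clock rises by half.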

Virtualization Improvements

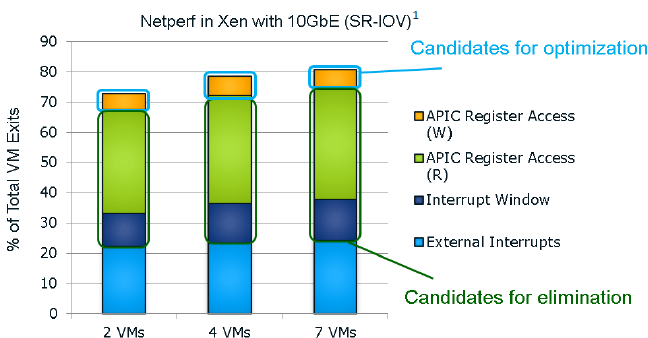

Each new generation of Xeon has reduced the number of cycles required for a VMexit or a VMentry, but another way to reduce hardware virtualization overhead is to avoid VMexits altogether. One of the major causes of VMexits (and thus also VMentries) is interrupts. With external interrupts, the guest OS has to check which interrupt has priority, and it does this by checking the APIC Task Priority Register (TPR). Intel already introduced an optimization for external interrupts in the Xeon 7400 series (back in 2008) with Intel VT FlexPriority. By making sure a virtual copy of the APIC TPR exists, the guest OS is capable of reading out that register without a VMexit to the hypervisor.

The Ivy Bridge core is now capable of eliminating the VMexits due to "internal" interrupts, interrupts that originate from within the guest OS (for example inter-vCPU interrupts and timers). To handle these, the virtual processor needs to access the APIC registers, which until now required a VMexit. Apparently, the current Virtual Machine Monitors do not handle this very well, as they need somewhere between 2000 and 7000 cycles per exit, which is high compared to other exits.
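To get a feel for what 2000 to 7000 cycles per exit means, the Python sketch below converts that cost into a share of one core's time. The cycle range comes from the paragraph above and the 2.7GHz clock from the E5-2697; the exit rate of 20,000 per second is a purely hypothetical figure chosen for illustration, not a measurement.

```python
CLOCK_HZ = 2.7e9           # E5-2697 clock speed quoted earlier in the article
EXITS_PER_SECOND = 20_000  # hypothetical APIC-access exit rate for one vCPU

def exit_overhead(cycles_per_exit, exits_per_second=EXITS_PER_SECOND,
                  clock_hz=CLOCK_HZ):
    """Fraction of one core's cycles spent handling VMexits."""
    return cycles_per_exit * exits_per_second / clock_hz

low = exit_overhead(2000)   # cheapest exit cost quoted in the text
high = exit_overhead(7000)  # most expensive exit cost quoted in the text
print(f"VMexit overhead: {low:.1%} to {high:.1%} of one core")
```

With that assumed exit rate, the overhead ranges from roughly 1.5% to a bit over 5% of a core; interrupt-heavy guests with higher exit rates would scale up proportionally.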

The solution is Advanced Programmable Interrupt Controller virtualization (APICv). The new Xeon has microcode that can be read by the guest OS without any VMexit, though writing still causes an exit. Some tests in Intel's labs show up to 10% better performance.

Related to this, Sandy Bridge introduced support for large pages in VT-d (faster DMA for I/O, chipset translates virtual addresses to physical), but in practice still split large pages into 4KB pages. Ivy Bridge fully supports large pages in VT-d.
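The cost of splitting a large page is easy to quantify. The Python sketch below counts how many 4KB translations one large page turns into; the 2MB and 1GB sizes are our assumption (the usual x86-64 large page sizes), since the text above does not say which sizes are involved.

```python
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB

def small_pages_per_large(large_page_bytes, small_page_bytes=4 * KIB):
    """Number of small-page translations needed to cover one large page."""
    return large_page_bytes // small_page_bytes

# Assumed large page sizes: 2MB and 1GB.
for label, size in (("2MB", 2 * MIB), ("1GB", GIB)):
    print(f"One {label} page split into 4KB pages: "
          f"{small_pages_per_large(size)} translations")
```

Each split large page therefore needs hundreds (or, for 1GB pages, hundreds of thousands) of separate 4KB translations instead of one.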

Only Xen 4.3 (July 2013) and KVM 1.4 (Spring 2013) support these new features. Both VMware and Microsoft are working on it, but the latest documents about vSphere 5.5 do not mention anything about APICv. AMD is working on an alternative called Advanced Virtual Interrupt Controller (AVIC). We found AVIC in the AMD64 programmer's manual on page 504, but it is not clear which Opterons will support it (Warsaw?).
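On a Linux host running KVM, one way to see whether APICv is in use is to read the `enable_apicv` parameter of the `kvm_intel` module. The Python sketch below assumes that parameter is exposed at the usual sysfs location and that the module is loaded; the function name and the "Y"/"1" interpretation are our own illustrative choices, and other hypervisors need a different check.

```python
from pathlib import Path

# Assumed sysfs location of the kvm_intel module parameter on a Linux host.
APICV_PARAM = Path("/sys/module/kvm_intel/parameters/enable_apicv")

def apicv_enabled(param_path=APICV_PARAM):
    """Return True or False from the parameter file, or None if unreadable."""
    try:
        value = Path(param_path).read_text().strip()
    except OSError:
        # File missing or unreadable: kvm_intel not loaded or not a Linux host.
        return None
    return value in ("Y", "1")

state = apicv_enabled()
if state is None:
    print("kvm_intel parameter not found; cannot tell whether APICv is used")
else:
    print(f"KVM reports APICv {'enabled' if state else 'disabled'}")
```

A result of `None` simply means the check does not apply to the machine it ran on.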

70 Comments

Kevin G - Tuesday, September 17, 2013 - link

Odd that Intel went the 3 die route with Ivy Bridge-EP. It was no surprise that the low end would be a variant of the 6 core Ivy Bridge-E found in the Core i7-4900 series. Apple leaked that the line up would scale to 12 cores. The surprise is a native 10 core part and the differences between it and the 12 core design.

Judging from the diagrams, Intel altered its internal ring bus for connecting cores. One ring orbits around all three columns of cores while another connects two columns. Thus the cores in the middle column have better latency for coherency as they have fewer stops on the ring bus to reach any core. The outer columns should have similar latency to the native 10 core chip for coherency: fewer cores to stop at but longer traces on the die between columns.

Not disclosed is how the 12 core chip divides cache. Previously each core would have 2.5 MB of L3 cache that was more local than the rest of the L3 cache. The middle column may have access to L3 cache on both sides.

The usage of dual memory controllers on the 12 core die is interesting. I wonder what measurable differences it produces. I'd fathom tests with a mix of reads/writes (ie databases) would show the greatest benefit as a concurrent read and write may occur. In a single socket configuration, enabling NUMA may produce a benefit. (Actually, how many single socket 2011 boards have this option?)

madmilk - Tuesday, September 17, 2013 - link

It looks like each ring is connected to two columns. One ring goes around all three, but does not connect to the center column.

JlHADJOE - Tuesday, September 17, 2013 - link

I'm guessing the 12-core might see action in the 8P segment, which is well overdue for an update.

psyq321 - Tuesday, September 17, 2013 - link

There will be 15-core E7 8xxx v2 CPUs based on the same IvyTown architecture.

As Intel is not showing the die-shot of a 12 core Ivy EP, I wonder if the 15-core EX and 12-core EP are using the same 3x5 die.

Kevin G - Tuesday, September 17, 2013 - link

The memory controller interfaces are different between the Ivy Bridge-EP and Ivy Bridge-EX. The EP uses DDR3 in all of its forms (vanilla, ECC, buffered ECC, LR ECC) whereas the EX version is going to use a serial interface similar in concept to FB-DIMMs. There will be two types of memory buffers for the EX line, one for DDR3 and later another that will use DDR4 memory. No changes need to be made to the new EX socket to support both types of memory.

Brutalizer - Tuesday, September 17, 2013 - link

I would have expected this newest Intel 12-core cpu to perform better. For instance, in Java SPECjbb2013 benchmarks, it gets 35,500 and 4,500. However, the Oracle SPARC T5 gets 75.700 and 23.300 which totally demolishes the x86 cpu. Have not the x86 cpus improved that much in comparison to SPARC? The x86 still lags behind?

https://blogs.oracle.com/BestPerf/entry/20130326_s...

JohanAnandtech - Tuesday, September 17, 2013 - link

Be careful when you compare results inflated for marketing purposes with independent "limited optimization" results ;-)

Phil_Oracle - Friday, February 21, 2014 - link

What do you mean by inflated for marketing purposes? SPECjbb2013 is clearly a real world, recent benchmark that’s fully audited by all vendors on the SPEC committee. If you make such claims, surely you have some evidence?

extide - Tuesday, September 17, 2013 - link

Don't forget those T5's run at TDP's in the 200-300W range... If you clocked up one of these babies to those power levels I am sure it would be >= to the T5.

Kevin G - Tuesday, September 17, 2013 - link

TDP's are indeed higher on the SPARC side but not as radically as you indicate. Generally they do not consume more than 200W. (Unfortunately Oracle doesn't give a flat power consumption figure for just the CPU, this is just an estimate based upon their total system power calculator. For reference, the POWER7 is 200W and the POWER7+ is 180W.)