The Impact of Disruptive Technologies on the Professional Storage Market

by Johan De Gelas on August 5, 2013 9:00 AM EST. Posted in IT Computing, SSDs, Enterprise, Enterprise SSDs

Introduction: Enterprise Storage 101

Since the introduction of x86-based servers at the end of the 20th century, the cost of server hardware has declined rapidly while performance per watt and performance per dollar have increased rapidly. This pushed the server market to evolve from closed, proprietary, and, most importantly, extremely expensive mainframes and RISC servers into today's highly competitive x86 server market. However, the professional storage market is still ruled by proprietary, legacy systems.

Today, you can get a very powerful server that can cope with most server workloads for something like $5000. Even better, you can run tens of workloads in parallel by virtualizing them. But go to the storage market with four or even five times that budget and you will likely return with a low-end SAN.

Worse yet, there is a good chance that this expensive device will choke regularly under a storage-intensive application. We quote a market survey conducted in March 2013:

Forty-four percent of respondents said disproportionate storage-related costs were an obstacle preventing them from virtualizing more of their workloads. Forty-two percent said the same about performance degradation or the inability to meet performance expectations.

Note that the study does not mention the percentage of customers stuck in denial :-). The performance per dollar of the average SAN array is mediocre at best, and the storage capacity per dollar is simply awful.

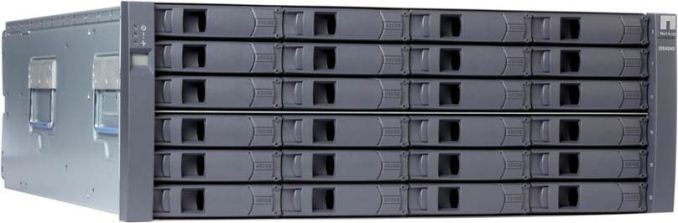

You might think that the hardware inside a SAN is vastly superior to what can be found in your average server, but that is not the case. EMC (the market leader) and others have disclosed more than once that “the goal has always been to use as much standard, commercial, off-the-shelf hardware as we can”. So your SAN array is probably nothing more than a typical Xeon server built by Quanta with a shiny bezel. A decent professional 1TB drive costs a few hundred dollars. Place that same drive inside a SAN appliance and suddenly the price per terabyte is multiplied by at least three, sometimes even 10! When it comes to pricing and vendor lock-in you can say that storage systems are still stuck in the “mainframe era” despite the use of cheap off-the-shelf hardware.

So why do EMC, NetApp, and the other giants in the storage market charge so much for what is essentially a Xeon-based server, an admittedly well-designed and reliable storage backplane, and some unreliable, slow hard drives? The reason is not some complex market situation that can only be explained by financial experts. No, the key is simply the basic component of a storage system: the unreliable and slow magnetic disk.

As we all know, the magnetic disk is the component that fails most in the data center, and it is by far the slowest core component in modern computers. Building a reliable and somewhat performant storage system based upon such a mediocre storage component can only be done with complex software. And complex software is yet another prime reason why IT services fail. So only a few companies that were able to build a solid reputation gained enough trust to succeed in the storage market.

This is why EMC, NetApp, IBM, and HP rule the storage market today even though they are charging an arm and a leg for a few terabytes of capacity. Professional buyers trust the devices these vendors make and are willing to pay a huge premium just to be sure that they get good reliability and decent but hardly compelling performance and capacity.

60 Comments


Jammrock - Monday, August 5, 2013

Great write up, Johan.

The Fusion-IO ioDrive Octal was designed for the NSA. These babies are probably why they could spy on the entire Internet without ever running low on storage IO. Unsurprisingly that bit about the Octal being designed for the US government is no longer on their site :)

Seemone - Monday, August 5, 2013

I find the lack of ZFS disturbing.

Guspaz - Monday, August 5, 2013

Yeah, you could probably get pretty far throwing a bunch of drives into a well-configured ZFS box (striped raidz2/3? Mirrored stripes? Balance performance versus redundancy and take your pick) and throwing some enterprise SSDs in front of the array as SLOG and/or L2ARC drives.

In fact, if you don't want to completely DIY, as many enterprises don't, there are companies selling enterprise solutions doing exactly this. Nexenta, for example (who also happen to be one of the lead developers behind modern open-source ZFS), sell enterprise software solutions for this. There are other companies that sell hardware solutions based on this and other software.

blak0137 - Monday, August 5, 2013

Another option for this would be to go directly to Oracle with their ZFS Storage Appliances. This gives companies the very valuable benefit of having hardware and software support from the same entity. They also tend to undercut the entrenched storage vendors on price.

davegraham - Tuesday, August 6, 2013

*cough* it may be undercut on the front end but maintenance is a typical Oracle "grab you by the chestnuts" type thing.

Frallan - Wednesday, August 7, 2013

More like "grab you by the chestnuts - pull until they rip loose and shove em up where they don't belong" - type of thing...

davegraham - Wednesday, August 7, 2013

I was being nice. ;)

equals42 - Saturday, August 17, 2013

And perhaps lock you into Larry's platform so he can extract his tribute for Oracle software? I think I've paid for a week of vacation on Ellison's Hawaiian island.

Everybody gets their money to appease shareholders somehow. Either maintenance, software, hardware or whatever.

Brutalizer - Monday, August 5, 2013

Discs have grown bigger, but not faster. Also, they are not safer nor more resilient to data corruption. Large amounts of data will have data corruption. The more data, the more corruption. NetApp has some studies on this. You need new solutions that are designed from the ground up to combat data corruption. Research papers show that NTFS, ext, etc. and hardware RAID are vulnerable to data corruption. Research papers also show that ZFS does protect against data corruption. You can find all the papers in the Wikipedia article on ZFS, including papers from NetApp.

Guspaz - Monday, August 5, 2013

It's worth pointing out, though, that enterprise use of ZFS should always use ECC RAM and disk controllers that properly report when data has actually been written to the disk. For home use, neither is really required.